HTTP 200 OK

Allow: GET, POST, HEAD, OPTIONS

Content-Type: application/json

Vary: Accept

[

{

"id": 23,

"title": "IndicVoices",

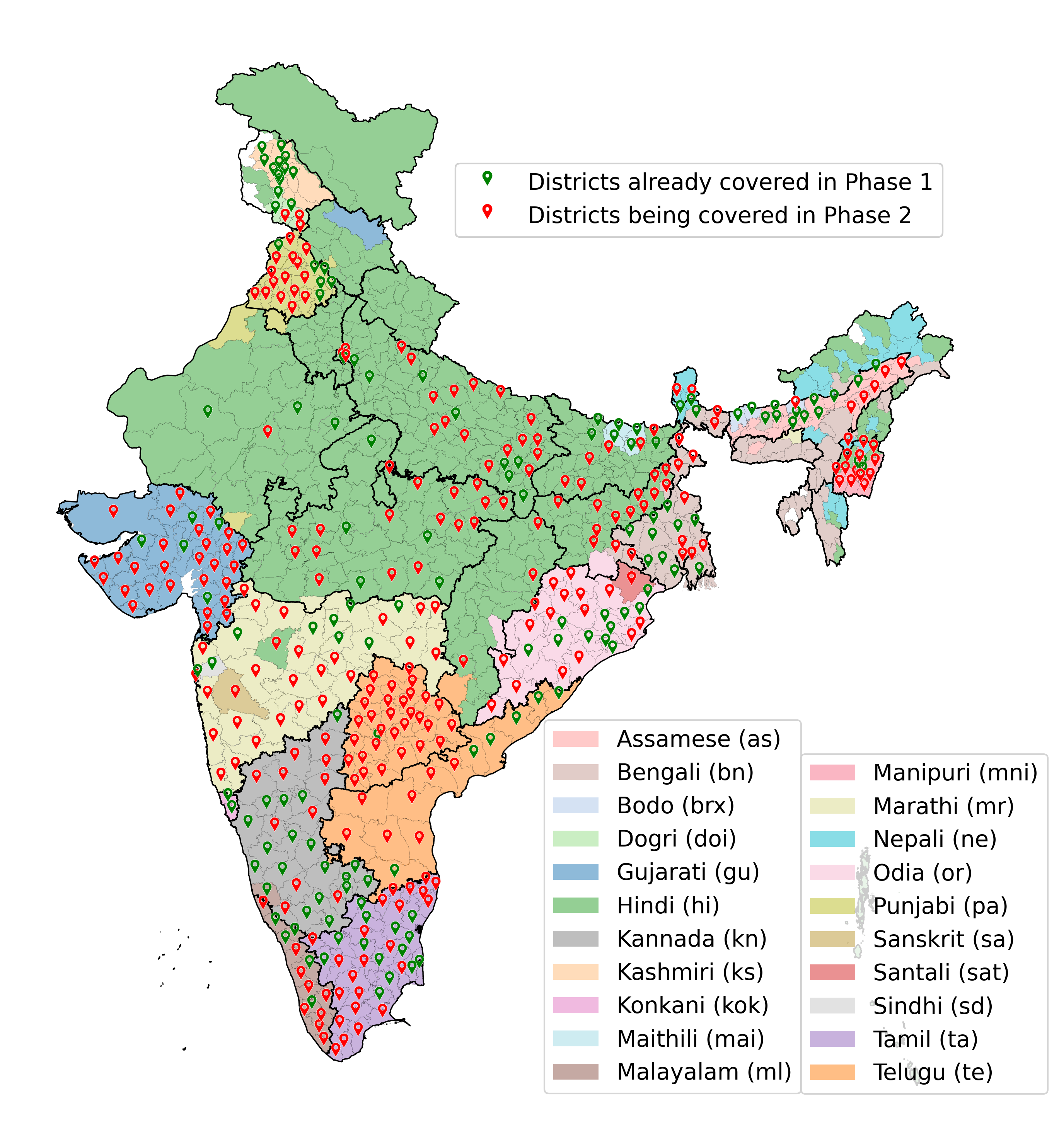

"description": "A monumental journey across India collecting 7,348 hours of spontaneous speech data from 16,237 speakers across 145 districts and 22 languages, funded by Bhashini under the Ministry of Electronics and Information Technology, Government of India.",

"published_on": "2025-10-06",

"image": null,

"related_link": "",

"markdown_content": "# IndicVoices: The Journey\\r\\n\\r\\nWelcome to the incredible journey of **IndicVoices**, a monumental endeavor funded by Bhashini, under the Ministry of Electronics and Information Technology, Government of India, and generously supported by Ekstep Foundation and Nilekani Philanthropies. Our ambitious mission? To collect spontaneous speech data across the rich tapestry of Indian languages, while honoring the vast linguistic, cultural, and demographic diversity that India boasts.\\r\\n\\r\\nThis quest took us on an exhilarating adventure across the country, from the snowy peaks of the north to the sun-kissed shores of the south, amassing a staggering **7,348 hours** of read, extempore, and conversational audio from **16,237 speakers**, spanning **145 Indian districts** and **22 languages**. The journey has just begun and we are committed to our goal of capturing ~17,000 hours of voice data across more than 400 districts in India.\\r\\n\\r\\nThe scale of this project was nothing short of epic, involving a dedicated army of **1,893 individuals**, including language experts, local mobilizers, coordinators, quality control experts, transcribers, language leads, and project managers. As we embarked on this ambitious journey, we didn't just collect data; we collected stories, laughter, and the myriad voices of India, making IndicVoices not just a project but a life-changing expedition for everyone involved.\\r\\n\\r\\nJoin us as we share some of the most unforgettable moments and experiences from this journey, offering a glimpse into the heart and soul of India through the voices of its people.\\r\\n\\r\\n## Humble Beginnings in the Holy City of Madurai\\r\\n\\r\\nOur journey commenced in **Madurai, Tamil Nadu**, famous for the Meenakshi Amman Temple. Seeking blessings from the Devi, we commenced our journey with high hopes and a clear vision. 
However, this pilot quickly became a reality check, challenging our assumptions at every turn, especially regarding participant mobilization.\\r\\n\\r\\nDespite the dense population of India, finding willing participants became unexpectedly difficult. **Trust was a major hurdle**; many were hesitant to share personal information during registration, fearing potential fraud, especially when digital transactions were mentioned. This skepticism slowed down the mobilization process significantly, making it challenging to achieve the desired diversity in age and gender ratios.\\r\\n\\r\\nAdditionally, the time commitment required from participants—sometimes extending up to **four hours** to complete the recording process—added another layer of complexity. This duration, much longer than anticipated, tested the patience and commitment of our participants. Hesitancy in speaking freely was another obstacle; many participants showed reluctance in opening up, leading to numerous retakes to capture responses that were natural and usable. This reluctance often resulted in responses that lacked depth and spontaneity, necessitating multiple attempts to elicit more meaningful dialogue. The culmination of these challenges not only extended the duration of our pilot but also highlighted the importance of building trust and ensuring clarity in communication to facilitate smoother data collection processes in the diverse linguistic landscape of India.\\r\\n\\r\\n\\r\\n\\r\\n## The Forgotten Generation\\r\\n\\r\\nRight from the beginning, we were very clear that we wanted sufficient participants from the **senior citizen age group** to capture their rich life experiences and tap into their repository of knowledge about Indian customs, traditions, and beliefs. However, addressing the underrepresentation of the senior demographic emerged as a significant hurdle.\\r\\n\\r\\nThe limited presence of individuals aged **60 and above** necessitated a reevaluation of our outreach efforts. 
Engaging with old age homes, senior citizen clubs, and conducting home visits became essential strategies to include this vital segment of the population. This challenge highlighted the importance of inclusivity and the need for tailored approaches to ensure diverse demographic participation.\\r\\n\\r\\nOur concerted efforts to involve senior citizens bore fruit in an unexpectedly delightful way, particularly notable in the diverse regions of India. A striking example of this success was observed in **Jammu**, where the older population displayed remarkable enthusiasm towards contributing to the project. This enthusiasm stemmed not just from their fluency in their native language (Dogri, in this case) but also from a deep-seated urge to preserve and pass on their linguistic heritage. Contrary to the challenges faced with other age groups and languages, senior citizens in Jammu and beyond became invaluable participants. Their eagerness to contribute not only enriched our dataset with authentic, nuanced language use but also underscored the critical role of senior citizens in safeguarding linguistic diversity.\\r\\n\\r\\n\\r\\n\\r\\n## The Need for a Guiding Hand\\r\\n\\r\\nIn the initial pilots, our fears about 'What if people don't speak' were confirmed. We learned the hard way that eliciting fluent speech with good quality content from individuals in interactions with strangers posed an intriguing challenge.\\r\\n\\r\\nRecognizing the pivotal role of participants' comfort in encouraging natural dialogue, we acknowledged the importance of assigning **dedicated coordinators** to accompany participants throughout the data collection process. Through targeted training, these coordinators were equipped with effective communication skills to engage participants authentically. 
Consequently, we established a procedural framework to ensure coordinators' proficiency in guiding participants through the data collection procedure.\\r\\n\\r\\nThe coordinators also helped the participants in overcoming technical challenges in installing the app, getting accustomed to the **\\\"record-verify-submit\\\" workflow** on the app, and clarifying the expectation with respect to each microtask.\\r\\n\\r\\n\\r\\n\\r\\n## Back to the Drawing Board\\r\\n\\r\\nAfter these initial pilots, we took a pause to critically reassess our data collection strategy. Insights from our initial pilots revealed that participants often provided repetitive answers, and everyday conversations failed to yield a diverse vocabulary and had very little coverage of names, numbers, entities, and brand names critical for downstream **Automatic Speech Recognition (ASR)** applications.\\r\\n\\r\\nThe pilots also offered us a glimpse into people's interactions with technology and their expectations from it, such as placing orders or completing digital transactions. We also realized that simple prompts like \\\"talk about politics\\\" fell short, and sparking a meaningful dialogue between strangers over a phone call proved challenging, often resulting in mere exchanges of pleasantries without covering a wide array of topics.\\r\\n\\r\\nRecognizing these gaps, we returned to the drawing board for an in-depth pre-collection phase. Our goal was to collect sentences with rich vocabulary, craft engaging questions spanning various domains, and create scenarios that simulate everyday digital interactions. 
We also refined our process to include tasks that would elicit responses rich in sequences of numbers, named entities, locations, dates, etc., and add role-play scenarios with detailed narratives to encourage dynamic conversations between two parties.\\r\\n\\r\\n## Weather Plays Spoilsport\\r\\n\\r\\n\\r\\n\\r\\nAfter refining our approach, we headed to the extreme north of India, to the beautiful land of **Kashmir**. Excited as we were, we encountered the formidable challenge of the region's harsh winter. Starting our pilot in mid-November in Srinagar, we were keenly aware of the narrow operational window before the onset of heavy snowfall, which renders data collection nearly impossible from December to February.\\r\\n\\r\\nThe unique weather conditions and the limited daylight hours significantly restricted our daily operations, allowing us only a brief period between **10 a.m. and 5:30 p.m.** for recordings. This time constraint meant that each coordinator could only manage sessions with a maximum of two participants per day, highlighting the need for a more adaptable approach to meet our productivity and deadline goals.\\r\\n\\r\\nAs we moved forward, it became crucial to innovate our data collection methods, shifting towards conducting recordings within the warmth and accessibility of participants' homes in the subsequent districts. This was a good learning for us and an early realization that given the diverse geographical landscape of India, we will have to be mindful of weather conditions: be it self-imposed afternoon curfews during summer in West Bengal, mobility issues during monsoon in Kerala and Goa, floods in Northeast India, harsh winters in the northern parts of Punjab, Delhi, Kashmir, Jammu, and so on. 
On a lighter note, while working on INDICVOICES, we have become experts on weather conditions in different parts of the country.\\r\\n\\r\\n## A Journey to the Remotest Parts of India\\r\\n\\r\\nAfter covering Kashmir (Kashmiri) and Jammu (Dogri), our journey took us to the north-eastern parts of India to conduct pilot studies covering Assamese, Bodo, Manipuri, and Nepali. Initially filled with enthusiasm, our venture into Assam's relatively tranquil settings soon led us into the more secluded and challenging terrains of Bodoland.\\r\\n\\r\\nHere, the sparse population and limited access to resources questioned the feasibility of our project, presenting a stark contrast to our prior experiences. Bodoland, with its serene yet isolated landscape of traditional tribal huts and quiet, dimly lit pathways, intimidating silence with no vehicular noise offered a unique set of challenges. The initial low turnout at our designated collection site in Kokrajhar prompted us to rethink our approach to engaging with remote tribal communities. Their concerns over privacy and the openness to share opinions reminded us of the delicate balance required to ensure inclusivity while being mindful of socio-political or geographical hurdles.\\r\\n\\r\\nOur journey further led us to **Kalimpong in West Bengal**, a region where the linguistic landscape shifts dramatically to predominantly Nepali speakers, diverging significantly from the Bengali culture of the state. This diversity within a single state highlighted the intricate patchwork of India's linguistic heritage. In response, we tailored our data collection approach, creating district-specific hints to engage participants in a manner that resonated with their unique linguistic identity.\\r\\n\\r\\nFollowing this, the endeavor in **Imphal West, Manipur**, introduced us to a different set of challenges marked by remoteness and the complexities of operating amidst constant disruptions. 
The initiation of the pilot in the compact confines of a local hotel was just the beginning of a journey punctuated by curfews, riots, and internet shutdowns, which significantly delayed our progress. The decision to romanize text data to include older participants unfamiliar with the local script, and continuing annotation work offline during internet shutdowns, were necessary to adapt to the ever-changing conditions in the state.\\r\\n\\r\\nThis expedition across the remote parts of India was not just a journey through diverse geographical landscapes but a deep dive into the heart of India's linguistic diversity. Each region, with its unique challenges, taught us the importance of resilience, adaptability, and the profound value of including voices from every region of the country, no matter how remote.\\r\\n\\r\\n\\r\\n\\r\\n## The Rural Urban Divide\\r\\n\\r\\nFollowing this, we did some pilots in rural districts in West Bengal covering two languages, Bengali and Santali. This illuminated the stark urban-rural divide, presenting unique challenges and learning opportunities at every turn. As we ventured into the tribal regions to engage with Santali-speaking communities, the serene yet complex rural setting offered a vivid contrast to the bustling urban environments we had previously navigated.\\r\\n\\r\\nConducting recording sessions in the midst of forests, under the canopy of trees, or in the humble backyards of huts, we were confronted with the realities of rural life: limited internet connectivity, the scarcity of participants fitting specific demographic profiles, unpredictable weather, and the reticence of women to participate.\\r\\n\\r\\n\\r\\n\\r\\nThese experiences shed light on the significant divide between urban and rural contexts, especially in terms of technological accessibility and the relevance of certain questions and use cases. 
It became apparent that some of our initial questions, designed with an urban mindset, were not resonant with the daily experiences of rural participants. For instance, the concept of **\\\"hailing a cab\\\"** was alien to many, revealing a disconnect in the applicability of our queries. This insight prompted us to revisit our approach and make our questions more closely aligned with the realities of rural life. We modified scenarios to involve \\\"arranging for transport for cattle or food grains,\\\" among other adjustments, ensuring our questions and use-cases were relevant and relatable to the lives of our rural participants.\\r\\n\\r\\nThis re-calibration was not merely about changing the wording of questions but about cultural and contextual sensitivity in linguistic data collection. This journey through the contrasting landscapes of India reinforced the notion that to ensure inclusivity we need to constantly change our assumptions, adapt, and improve our processes.\\r\\n\\r\\n## The Silence Amidst the Noise\\r\\n\\r\\nWhile rural areas offered a raw and unfiltered glimpse into India's linguistic diversity, urban settings introduced a different set of challenges. In bustling metropolises like **Mumbai**, the search for tranquil venues for recording sessions became a Herculean task, particularly in densely populated areas.\\r\\n\\r\\nThe urban clamor and the scarcity of quiet spaces escalated the costs and complexities of data collection, underscoring the logistical hurdles unique to urban centers. Moreover, the enthusiasm for participating in data collection efforts was noticeably muted among the urban populace, especially among professionals leading busy lives. This apathy necessitated innovative mobilization strategies to engage a demographic that seemed distant from the cause. Despite these obstacles, the endeavor to capture the linguistic essence of India's urban centers was as crucial as that of its rural counterparts. 
Overcoming this silence and capturing voices amidst the noise became an essential part of our mission.\\r\\n\\r\\n## India, a Land of Many Festivals\\r\\n\\r\\nNavigating the vibrant maze of India's festivals proved to be one of the most colorful challenges in our data collection journey. In a country where each region celebrates its own set of festivals with fervor and devotion, scheduling work around these celebrations was akin to finding a needle in a haystack of holidays.\\r\\n\\r\\nFrom **Durga Puja** in West Bengal and Odisha, **Ramzaan** in Kashmir, **Bihu** and **Pongal** across other parts, to the universally celebrated **Diwali**, our calendar was a mosaic of cultural festivities.\\r\\n\\r\\nThe complexity of scheduling was humorously encapsulated in a conversation with one of our partners, which turned into a comedic back-and-forth of date dodging:\\r\\n\\r\\n- **Partner:** We can't start in May as it is too hot that time of the year!\\r\\n- **We:** Ok, let's start in June then.\\r\\n- **Partner:** No, that would be difficult due to the monsoon season. Both June and July would be washed out, quite literally!\\r\\n- **We:** That looks bad. Then we should definitely start in August.\\r\\n- **Partner:** But then we would have Ganesh Chaturthi which is a very important festival here. No participants would turn up during that time.\\r\\n- **We:** Phew, what about September?\\r\\n- **Partner:** Schools and colleges will have exams so we will not be able to use them as venues (other options would be expensive).\\r\\n- **We (frustrated):** Okay, then I guess after that we would have to wait for Dusshera (October), Diwali (November), Christmas and New Year (December) to also pass by.\\r\\n- **Partner (with a straight face):** Yes, that would be ideal!\\r\\n\\r\\nThis humorous exchange underscored a significant reality of executing a project of this scale in India. 
It taught us the importance of flexibility, patience, and the ability to laugh at the seemingly impossible task of scheduling around the endless cycle of festivals. In the end, these challenges just became a part of our journey, making every successfully covered district feel like a festival in its own right.\\r\\n\\r\\n## Everything That Could Go Wrong\\r\\n\\r\\nAmidst all the celebrations, we soon realized that in a remote and distributed setup involving a large number of people, ensuring quality is a challenging task. Early in the pilot phases, we observed participants abruptly stopping mid-speech or drifting into unrelated conversations with coordinators without stopping the recording, leading to fragmented audio and content corruption.\\r\\n\\r\\nDespite comprehensive guidelines and thorough training, the human element introduced unpredictability in task execution, with participants sometimes simply reading the questions/prompts instead of answering/enacting them. It became very clear that we need to have an in-house QC team whose task would be to listen to every audio file collected on the ground and tag such errors. We iteratively refined our error categorization, adapting to new types of errors as they were discovered.\\r\\n\\r\\n\\r\\n\\r\\nIn their eagerness to assist participants, coordinators sometimes went above and beyond, inadvertently scripting entire responses or conversations. These were then merely read aloud by the participants, transforming what was supposed to be a spontaneous exchange into a rehearsed performance! This would diminish the authenticity of spontaneous speech. 
To combat these issues and preserve data integrity, we introduced error categories like 'Bad extemporaneous,' and 'Book read,' so that such content could be tagged.\\r\\n\\r\\nSimilarly, in telephonic conversations, a tendency emerged for one participant to dominate, leading to minimal contributions from the other party, a phenomenon tagged as 'SST' (Single Speaker Talking) by our QA team. Categories like 'Stretching,' 'Repeating Content,' and 'Long Pauses' were introduced to counter verbosity and repetition, ensuring the recordings were content-rich.\\r\\n\\r\\nIn several places, the authenticity of participants' identities emerged as a significant challenge. Concerns were raised when the voice of a participant didn't seem to align with their reported age and/or gender, leading to discoveries of intentional misinformation or unintentional errors in registration. Some instances revealed inconsistency in voices under the same participant ID, hinting at multiple individuals sharing a single ID, while others showed the same voice across different IDs, indicating individuals masquerading as multiple participants.\\r\\n\\r\\nTo address these authenticity issues, we introduced a micro-task requiring participants to record a video stating basic information, allowing our QC team to verify age and gender visually. Disparities led to data rejection, while voice mismatches in audio samples triggered further scrutiny. Recognizing privacy concerns and cultural sensitivities, particularly among female participants reluctant to record videos, we offered alternatives like live verification through WhatsApp calls, conducted by female QC members, ensuring a respectful and secure verification process.\\r\\n\\r\\nData collection across diverse settings—outdoors, in public schools, small hotels, and participants' homes—brought the challenge of background noise interference. Distant ambient noises were less intrusive compared to the constant buzz of fans in closed spaces. 
Distinguishing between unavoidable natural background sounds and disruptive persistent noises was essential.\\r\\n\\r\\nAn unexpected challenge arose with the capture of highly expressive, albeit profane, reactions to daily frustrations, necessitating a balance between authenticity and appropriateness. This led to the creation of an 'Objectionable Content' category to carefully screen for hate speech or inappropriate content.\\r\\n\\r\\nThrough this iterative process of reviewing audio files our QA team came up with **23 error categories** which comprehensively captured everything that could go wrong!\\r\\n\\r\\n## The Subtle Art of Transcription\\r\\n\\r\\nWhile we continued on our journey across the country, little did we know that our greatest challenge lay not in the fieldwork, but in the nuanced art of transcription. Initially perceived as a straightforward task of converting speech to text, the complexity of transcribing the diverse speech styles of thousands of individuals soon became apparent.\\r\\n\\r\\nThe main issue was the difference between colloquially spoken language and standardized language found in textbooks. The former contains words which may not have any spellings in standard textbooks or dictionaries but still cannot be ignored, simply because this is how people talk! This required us to choose between the pure phonetic representation of speech resulting in non-standard spellings on one hand, and pure textbook representations which deviated from what was being said on the other.\\r\\n\\r\\nTo address this we ended up with a **two-level transcription approach** wherein the first level the transcribers were asked to transcribe verbatim without worrying about correctness of spellings (thus emphasizing only on phonetic fidelity). 
In the second level the transcribers were asked to standardize the transcription to convert the phonetically correct representations to nearest standard spellings in textbooks.\\r\\n\\r\\nThus the lazily spoken Hindi word **\\\"muje\\\"** would be transcribed verbatim as \\\"muje\\\" in Level 1 ensuring phonetic fidelity and then standardized to **\\\"mujhe\\\"** in Level 2 ensuring spelling accuracy.\\r\\n\\r\\nHowever, aligning all language experts and transcribers with this novel framework proved challenging. Many transcribers initially resisted typing verbatim spellings, feeling it betrayed the standard writing style. Through extensive discussions, iterative rounds of feedback, and a collaborative effort across language teams, we established a set of guidelines that balanced standardized systems with fidelity to the actual sound wave. This meticulous process underscored transcription not just as a task but as an art form, requiring a deep understanding of linguistic nuances, cultural context, and the delicate balance between preserving the integrity of spoken language and adhering to standard linguistic conventions.\\r\\n\\r\\n\\r\\n\\r\\n## Stories from the Heart of India\\r\\n\\r\\nDespite the hurdles, the journey was largely filled with numerous positive experiences. The diversity we encountered in weather, languages, and cultures was overwhelming, yet it filled our hearts with an indescribable warmth and a profound appreciation for India's rich cultural tapestry. The love and hospitality offered by the people, their eagerness to share their stories, and their enthusiasm for preserving their linguistic heritage were truly heartening. Our journey underscored the power of language as a bridge to understanding people, their cultures, and the nation at a deeper level.\\r\\n\\r\\nIn a world where technology often seems to isolate us, our project brought people closer together, allowing them to connect and share their lives through their native languages. 
This technical endeavor became a conduit for genuine human connection, enabling people from various backgrounds to express their lives, traditions, and experiences.\\r\\n\\r\\nFrom a Tamil Nadu entrepreneur sharing her journey to success, to a Kashmiri woman finding solace in prayer during tumultuous times; from a young boy in Kashmir divulging his secret recipe, to a Manipuri girl aspiring for higher education amidst challenges, each story added a unique voice to the rich mosaic of IndicVoices.\\r\\n\\r\\nThe diversity in stories we collected — ranging from personal achievements and cultural narratives to expressions of socio-political concerns — highlighted the importance of the dataset we were building. Whether it was an old man reminiscing about life seventy years ago, a professor discussing the significance of a local folk culture, or a young Nepali girl playfully sharing tales of her dates, these narratives painted a vivid picture of the diverse life across India. Such stories not only enrich our understanding but also celebrate the myriad facets of Indian life, from its challenges to its triumphs.\\r\\n\\r\\n## Miles to Go\\r\\n\\r\\nDespite the vast disparities in lifestyle, language, and social circumstances across different regions, a common thread of respect for linguistic heritage and a passion for technology united participants from all walks of life. This shared enthusiasm underscores a collective commitment to preserving India's linguistic diversity, bridging the gap between tradition and modernity.\\r\\n\\r\\nIndicVoices, thus, stands as a testament to the enduring spirit of India and its people, weaving together the voices of its many inhabitants into a vibrant tapestry of stories that resonate with authenticity, diversity, and a profound sense of belonging.\\r\\n\\r\\nYet, as extensive as our journey has been, it is but a chapter in a much larger story. 
With over **12,000 hours of recordings** still ahead, our expedition through the heart of India's linguistic landscape is far from over. Like the timeless verse, \\\"miles to go before I sleep,\\\" our path stretches onward, promising more voices to be heard, more stories to be shared, and an ever-deepening appreciation for the rich mosaic of Indian culture and language.\\r\\n\\r\\nThis journey has only just begun, and the road ahead is filled with the promise of discovery, understanding, and the celebration of India's incredible diversity.\\r\\n\\r\\n",

"cover_image": {

"src": "https://shoonyastorageproduction.blob.core.windows.net/ai4bwebsite/indicvoices4.png"

},

"authors": null,

"affiliations": null,

"publication_links": null,

"sections": null,

"team": null,

"bibtex": "",

"page_url": "indic-voices"

},

{

"id": 27,

"title": "Indic LLM Suite",

"description": "The advent of Large Language Models (LLMs), especially ChatGPT, marked a revolutionary leap in how a layperson can interact with and engage with AI. It brought the power of NLP and intelligence into the hands of the masses by transforming interactions from command-based exchanges to conversational dialogues.",

"published_on": "2024-03-14",

"image": "https://admin.models.ai4bharat.org/media/images/Indic%20LLM%20Suite/indicllmsuite3.png",

"related_link": null,

"markdown_content": "",

"cover_image": null,

"authors": [

{

"name": "Mohammed Safi Ur Rahman Khan"

},

{

"name": "Mitesh M. Khapra"

}

],

"affiliations": null,

"publication_links": [

{

"text": "ArXiv",

"url": "https://arxiv.org/abs/2403.06350",

"icon": "arxiv"

},

{

"text": "GitHub",

"url": "https://github.com/AI4Bharat/IndicLLMSuite",

"icon": "github"

},

{

"text": "Hugging Face",

"url": "https://huggingface.co/collections/ai4bharat/indicllmsuite-65ee7d225c337fcfa0991707",

"icon": "huggingface"

}

],

"sections": [

{

"type": "markdown",

"heading": "What are some of the developments in the Indic-LLM World?",

"heading_level": "h1",

"content": "The advent of Large Language Models (LLMs), especially ChatGPT, marked a revolutionary leap in how a layperson can interact and engage with AI. It brought the power of NLP and intelligence into the hands of the masses by transforming interactions from command-based exchanges to conversational dialogues.\n\nNow, while English-speaking users have experienced their \"ChatGPT moment,\" India eagerly awaits its turn. Although India is a nation of great linguistic diversity and vibrant cultural heritage, technological advancements (like ChatGPT) remain largely inaccessible to most of its populace. LLMs can revolutionize the lives of millions by removing the barriers for those who are technologically unaware. An India-centric LLM could democratize access to information, services, and opportunities by enabling interactions in local languages. Cases in point are the [Jugalbandi bot](https://news.microsoft.com/source/asia/features/with-help-from-next-generation-ai-indian-villagers-gain-easier-access-to-government-services/) and the [PM Kisan bot](https://indiaai.gov.in/article/exploring-pradhan-mantri-kisan-ai-chatbot). Such a model would be a leap towards inclusivity, ensuring that the benefits of these models are not confined to an educated elite."

},

{

"type": "markdown",

"heading": "The Journey Begins",

"heading_level": "h2",

"content": "The journey for IndicLLMs began with the launch of [IndicBERT](https://ai4bharat.iitm.ac.in/indicbert/) in 2020, which has since amassed over 400K downloads from [Hugging Face](https://huggingface.co/ai4bharat/indic-bert). IndicBERT was primarily focused on Natural Language Understanding (NLU), while [IndicBART](https://ai4bharat.iitm.ac.in/indicbart/), introduced in 2021, aimed to cater to Natural Language Generation. Both models were pretrained from scratch, utilizing the limited data and model scale available, thanks to generous grants from EkStep Foundation and Nilekani Philanthropies.\n\nHowever, with the introduction of large open models like Llama, Llama-2, and Mistral, the emphasis has shifted towards adapting these models for Indic languages. Various initiatives have been undertaken to develop both Base and Chat models for different languages, including [OpenHathi (Base)](https://www.sarvam.ai/blog/announcing-openhathi-series), [Airavata (Chat)](https://ai4bharat.github.io/airavata/), [Gajendra-v0.1 (Chat)](https://huggingface.co/BhabhaAI/Gajendra-v0.1), [Kan-LLaMA](https://huggingface.co/collections/Tensoic/kan-llama-llama-659ff51a80ac3d5554fa2cb7), [odia_llama2](https://huggingface.co/OdiaGenAI/odia_llama2_7B_v1), [tamil_llama](https://huggingface.co/abhinand/tamil-llama-7b-base-v0.1), [among others](https://github.com/OdiaGenAI/Indic_LLM_Resource_Catalog)."

},

{

"type": "markdown",

"heading": "Current Challenges",

"heading_level": "h2",

"content": "The approach these models employ builds on existing pretrained English-only models (typically Llama-2) and adapts them for Indic languages. This adaptation process includes extending the tokenizer and the embedding layer, followed by one or more rounds of continual pretraining, using data from existing multilingual corpora like mC4, OSCAR, ROOTS, etc., to develop the Base models. When it comes to creating Chat models, the process typically involves finetuning the various Base models (both English and Indic) using translated versions of existing English datasets like Dolly, OpenAssistant, UltraChat, etc.\n\nWhile these models often demonstrate reasonably good language generation capabilities, they still [fall short in factuality](https://ai4bharat.github.io/airavata/#:~:text=Examples%20where%20Airavata%20output%20has%20errors). This highlights one of the biggest open problems in building Indic models by adapting English models, namely effective cross-lingual knowledge transfer from English."

},
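The adaptation step described above (extending the tokenizer and the embedding layer) can be sketched in a few lines. This is a minimal, framework-free illustration, not the procedure used by any of the models named here: the function name `extend_vocab` is hypothetical, and initializing each new row with the mean of the existing embeddings is one common heuristic that we assume purely for the example.

```python
from statistics import fmean


def extend_vocab(vocab, embeddings, new_tokens):
    """Return copies of (vocab, embeddings) extended with unseen tokens.

    Assumption for this sketch: each new token's embedding row starts as
    the column-wise mean of the existing rows. Continual pretraining would
    then update these rows.
    """
    vocab = dict(vocab)                         # do not mutate the caller's vocab
    embeddings = [row[:] for row in embeddings]
    mean_row = [fmean(col) for col in zip(*embeddings)]
    for token in new_tokens:
        if token in vocab:                      # already covered by the base tokenizer
            continue
        vocab[token] = len(embeddings)          # next free token id
        embeddings.append(mean_row[:])
    return vocab, embeddings


# Toy base model: two tokens, two-dimensional embeddings.
base_vocab = {"the": 0, "cat": 1}
base_emb = [[0.0, 2.0], [2.0, 4.0]]

vocab, emb = extend_vocab(base_vocab, base_emb, ["नमस्ते", "cat", "வணக்கம்"])
```

In a real model the embedding table and the output projection would both be resized, and the new rows would only become useful after the continual pretraining rounds mentioned above.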

{

"type": "markdown",

"heading": "Why Do We Need Indic-Only Models?",

"heading_level": "h2",

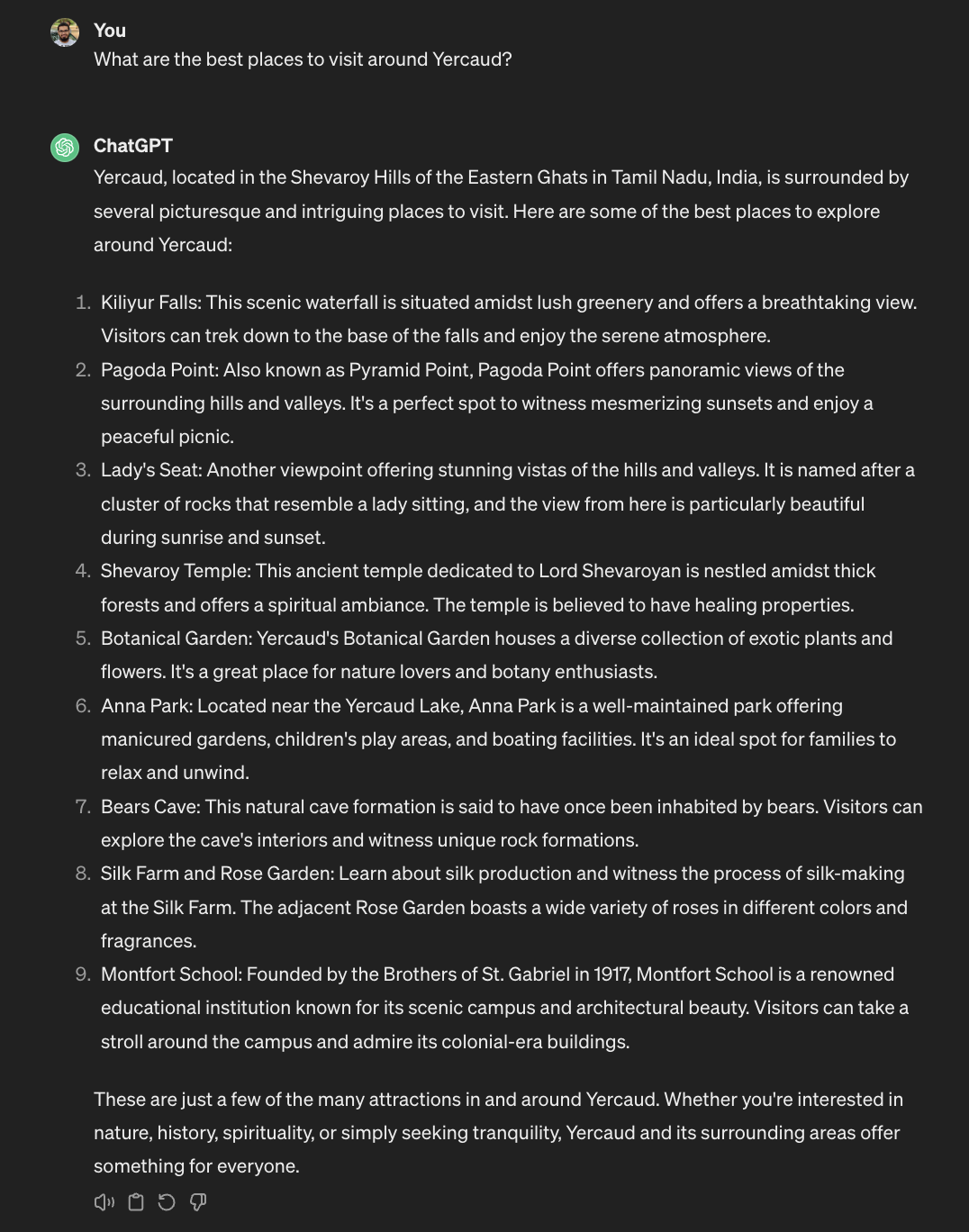

"content": "This raises the following question: If models like GPT-3.5 and GPT-4 can perform well in Indian languages, why do we need Indic-only models? Why not just use these models directly? Or consider the translate-test approach, where we first translate the prompt into English, get the output from the model, and then translate the answer back to the original language.\n\nThe answer to these questions is multi-faceted. First, even though existing English models can produce decent Indic content, the real challenge lies in tokenization. Indic languages are not efficiently represented in these tokenizers. This results in the model generating [up to 4x more tokens](https://www.sarvam.ai/blog/announcing-openhathi-series#:~:text=Similar%20information%20content%20in%20English%20when%20expressed%20in%20Hindi%20has%20almost%203%2D4x%20higher%20tokens%20in%20the%20GPT%20and%20Llama%20tokenisers.) for conveying similar information content in Indic languages. Second, while these models perform well with high-resource Indian languages like Hindi, Telugu, Tamil, and Urdu, they struggle significantly with low-to-medium resource languages such as Oriya, Kashmiri, and Dogri. Third, the issue of hallucinations becomes more pronounced in Indic languages, and the accuracy of the answers decreases noticeably. For instance, Yercaud, a famous hill station town in Tamil Nadu, is recognized correctly by GPT-3.5 in English. However, when the same question is posed in Hindi and Telugu, the model's responses are wildly inaccurate, as shown in the figure.\n\nLastly, even in English, these models often fail to recognize and answer questions that are culturally nuanced. The figure below shows an example where ChatGPT struggles to answer a cultural question even in English."

},
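As a rough illustration of why Indic text is costly for tokenizers built mainly on English data: a byte-level tokenizer that has learned no merges for a script falls back to roughly one token per UTF-8 byte, and every Devanagari code point occupies three bytes. The sketch below only measures that byte overhead. It is a proxy under that assumption, not a measurement of any specific GPT or Llama tokenizer, and the Hindi sentence is an illustrative rendering of the English one.

```python
def utf8_bytes_per_char(text: str) -> float:
    """Average number of UTF-8 bytes per Unicode code point in `text`."""
    if not text:
        raise ValueError("text must be non-empty")
    return len(text.encode("utf-8")) / len(text)


english = "Where is Yercaud?"
hindi = "यरकौड कहाँ है?"  # illustrative Hindi rendering of the same question

for label, sentence in [("English", english), ("Hindi", hindi)]:
    print(f"{label}: {len(sentence)} code points, "
          f"{len(sentence.encode('utf-8'))} bytes, "
          f"{utf8_bytes_per_char(sentence):.2f} bytes per code point")
```

Actual token counts depend on the merges a given tokenizer has learned, so the ratio reported for real tokenizers can be lower or higher than this byte-level figure.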

{

"type": "markdown",

"heading": "What is the way forward?",

"heading_level": "h1",

"content": "The above issues highlight the need for a good open-source \"Indian LLM.\" Note that we use the term \"Indian\" rather than \"Indic\". This distinction is not just semantic; it's foundational to the vision for what such a model should achieve. While generation in \"Indic\" languages is a valuable goal, it barely scratches the surface of what's required. India, with its rich mixture of cultures, languages, and dialects, demands a model that is more than just multilingual. We need a model that's truly \"multicultural\" in its understanding and generation capabilities.\n\nAn \"Indian\" LLM, while being linguistically versatile, should also comprehend the Indian context - capturing the nuances of various cultural idioms, historical references, regional specifics, and, most importantly, the variety of ways in which the diverse Indian population interacts. It should cater to even the remotest Indian user, recognizing her unique questions, concerns, conversational contexts, etc. The objective, therefore, extends beyond mere language understanding or generation; it's about building a model that can act as a digital knowledge companion for the Indian user."

},

{

"type": "markdown",

"heading": "Pre-training",

"heading_level": "h2",

"content": "Pretraining data sets the stage for a model's general understanding of language, context, and world knowledge. While the sheer volume of data is important, it alone doesn't guarantee success. For an \"Indian\" LLM, the data must be high-quality and clean, and must exhibit a rich diversity that mirrors India's cultural, linguistic, and social fabric. This means curating texts in \"all\" Indian languages and ensuring these texts span the vast diversity of content, from news articles and literature to posts and personal narratives. It should be rich in cultural references, local knowledge, and the everyday realities of life in India (from \"Janab\" in Lucknow, \"Dada\" in Kolkata, \"Bhai\" in Mumbai, \"Miyan\" in Hyderabad, all the way to \"Anna\" in Chennai).\n\nThis data should have temporal diversity, from historical texts and perspectives to contemporary content (from the birth of sage Pulastya to the rise of Shubman Gill). The curation process must aim for a balance that allows the model to develop an understanding that is broad yet firmly anchored in the Indian ethos."

},

{

"type": "markdown",

"heading": "Instruction Fine-tuning Data",

"heading_level": "h2",

"content": "Building upon the foundation laid by pre-training, Instruction Fine-tuning plays a key role in refining the model's abilities by teaching it to respond accurately and relevantly to the user's prompts. This phase is essential for ensuring the model's outputs are aligned to the users' specific requirements and conversational preferences.\n\nThe ideal instruction fine-tuning data set should encompass prompts and responses across all Indic languages, addressing the full spectrum of conversations and inquiries one might encounter in India. This includes everything from everyday casual conversations to formal discourse and from straightforward queries to complex reasoning questions. It's important to capture the myriad ways in which people across different regions of India pose questions, considering local idioms, slang, and the often-seen mix of languages in daily speech.\n\nIn summary, the ideal instruction fine-tuning data should be able to prepare the LLM for handling all the different user intentions and the real-world interactions that define the daily lives of Indians. The essence is to capture \"Everything Indians might ask\" and provide responses in \"Every way Indians expect to receive them\"."

},

{

"type": "markdown",

"heading": "Toxic Alignment Data",

"heading_level": "h3",

"content": "Aligning chat models to responsibly handle toxic prompts and queries from the user is crucial to developing ethically responsible models. When asked a question or command that is toxic in nature, the model should refrain from answering, ideally also providing an appropriate reason for it. This alignment is crucial to ensure that the model does not amplify harmful biases, stereotypes, or abusive language.\n\nGiven the diverse linguistic, cultural and social landscape of India, aligning the models appropriately for toxic content becomes a big challenge. Since the nuances of language and culture can significantly vary from one region to another, identifying and addressing toxic content requires a carefully thought out and planned approach.\n\nThe ideal toxic alignment data for an Indian LLM must encompass a wide range of prompts and refusals/reasoning in all Indic languages, capturing the various forms of toxic content that users might input. This includes different types of toxicity and severity levels, from direct abuse to more subtle forms of toxicity that may be contextually or culturally specific. The main challenge lies in accounting for the diverse linguistic characteristics of India, where something considered toxic in one language or region may be perfectly normal in another. Compare English and Hindi, for instance: calling someone an owl in English is often praise, as in “He is a wise owl”, whereas calling someone an owl (“उल्लू”) in Hindi is offensive, implying that the person is foolish. Aligning the models to handle such ambiguity with grace is therefore a very interesting challenge.\n\nHence, by incorporating comprehensive and culturally sensitive toxic alignment data, the model will be better equipped to contribute positively to interactions, making it a safer and more inclusive tool for the Indian populace."

},

{

"type": "markdown",

"heading": "The Blueprint for creating this data and our initial efforts",

"heading_level": "h1",

"content": "Given the above challenges and fairly rich wishlist, it's crucial to define a clear and strategic blueprint for this. In this section we describe what we think is a good blueprint for creating, curating and refining the data for training an \"Indian LLM\", as well as the initial efforts we have undertaken to create the \"IndicLLMSuite\"."

},

{

"type": "markdown",

"heading": "Pre-training: Sangraha Corpus",

"heading_level": "h2",

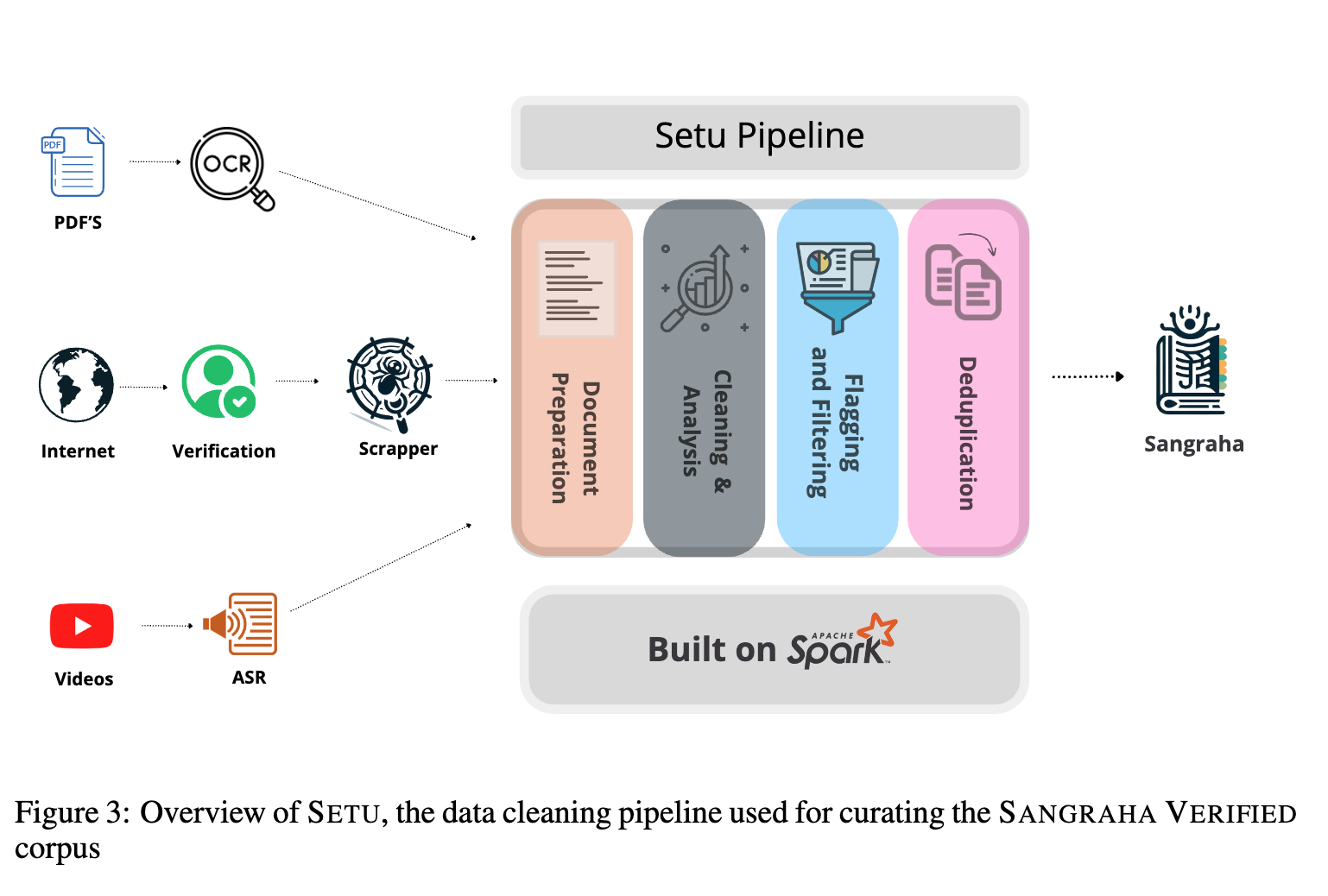

"content": "We introduce the Sangraha corpus, the largest pre-training corpus for Indian languages. Sangraha has three main splits - Sangraha Verified, Sangraha Synthetic and Sangraha Unverified."

},

{

"type": "table",

"heading": "Sangraha Corpus Statistics by Language",

"heading_level": "h3",

"headers": [

"Language Code",

"Sangraha Verified (M tokens)",

"Sangraha Synthetic (M tokens)",

"Sangraha Unverified (M tokens)",

"Total Tokens (M)"

],

"rows": [

[

"asm",

"292.1",

"11,696.4",

"17.5",

"12,006.0"

],

[

"ben",

"10,604.4",

"13,814.1",

"5,608.8",

"30,027.5"

],

[

"brx",

"1.5",

"-",

"-",

"1.5"

],

[

"doi",

"0.06",

"-",

"-",

"0.06"

],

[

"eng",

"12,759.9",

"-",

"-",

"12,759.9"

],

[

"gom",

"10.1",

"-",

"-",

"10.1"

],

[

"guj",

"3,647.9",

"12,934.5",

"597.0",

"17,179.4"

],

[

"hin",

"12,617.3",

"9,578.7",

"12,348.3",

"34,544.3"

],

[

"kan",

"1,778.3",

"12,087.4",

"388.8",

"14,254.5"

],

[

"kas",

"0.5",

"-",

"-",

"0.5"

],

[

"mai",

"14.6",

"-",

"-",

"14.6"

],

[

"mal",

"2,730.8",

"13,130.0",

"547.8",

"16,408.6"

],

[

"mar",

"2,827.0",

"10,816.7",

"652.1",

"14,295.8"

],

[

"mni",

"7.4",

"-",

"-",

"7.4"

],

[

"npi",

"1,822.5",

"10,588.7",

"485.5",

"12,896.7"

],

[

"ori",

"1,177.1",

"11,338.0",

"23.7",

"12,538.8"

],

[

"pan",

"1,075.3",

"9,969.6",

"136.9",

"11,181.8"

],

[

"san",

"1,329.0",

"13,553.5",

"9.8",

"14,892.3"

],

[

"sat",

"0.3",

"-",

"-",

"0.3"

],

[

"snd",

"258.2",

"-",

"-",

"258.2"

],

[

"tam",

"3,985.1",

"11,859.3",

"1,515.9",

"17,360.3"

],

[

"urd",

"3,658.1",

"9,415.8",

"1,328.2",

"14,402.1"

],

[

"tel",

"3,706.8",

"11,924.5",

"647.4",

"16,278.7"

],

[

"**Total**",

"**64,306.1**",

"**162,707.9**",

"**24,307.7**",

"**251,321.0**"

]

]

},
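The table stores its figures as strings, with thousands separators and "-" for splits that have no data. A minimal sketch of reading a few rows and recomputing their totals follows; the helper names `parse_cell` and `row_total` are hypothetical, and the three rows are copied from the table above (Verified, Synthetic, Unverified, in millions of tokens).

```python
def parse_cell(cell: str) -> float:
    """Convert a table cell such as '11,696.4', '-' or '**64,306.1**' to a float."""
    cell = cell.strip("*")          # drop markdown bold markers
    if cell == "-":                 # "-" marks a split with no data
        return 0.0
    return float(cell.replace(",", ""))


def row_total(cells) -> float:
    """Sum the per-split token counts (in millions) for one language."""
    return sum(parse_cell(c) for c in cells)


# Verified, Synthetic, Unverified (millions of tokens), copied from the table.
rows = {
    "asm": ["292.1", "11,696.4", "17.5"],
    "brx": ["1.5", "-", "-"],
    "hin": ["12,617.3", "9,578.7", "12,348.3"],
}

for lang, cells in rows.items():
    print(f"{lang}: {row_total(cells):,.1f}M tokens")
```

Treating "-" as zero is a choice made for this sketch; a stricter reader might keep it as a missing value instead.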

{

"type": "markdown",

"heading": "Sangraha Verified",

"heading_level": "h3",

"content": "The web is undeniably the most prominent source of content for training LLMs and has been the go-to option for the majority of English LLMs, due to its vastness and easy accessibility. It offers an unparalleled range of textual data across domains, making it an invaluable source for collecting diverse linguistic data as well as world knowledge. There has been a prominent rise in English corpora curated from the web like C4, RefinedWeb, RedPajama-V2, etc. In the case of non-English languages, there has been a steady rise (although much slower) with mC4, OSCAR, CulturaX, MADLAD-400, etc.\n\nOne prominent issue is the inherent noisiness of web-sourced data. The open nature of the web means that the quality of content varies drastically and is often riddled with unwanted content, such as adult, biased, toxic, and poorly translated content. Given that the overall web content for Indic languages is very low, it is of utmost importance to ensure that the crawled content is of extremely high quality. Thus we introduce the process of human verification of each website as a part of our data collection pipeline. We release a total of 48B tokens of high quality verified web crawled content in all 22 scheduled Indian languages.\n\nDespite the expansive breadth of the web, the pool of high-quality Indian language data is finite and will soon be exhausted. This limitation stems from the narrower scope of content creation in these languages and the low concentration of high-quality sources. As LLMs are extremely data-hungry, relying solely on web content becomes unsustainable for an Indian LLM. Hence, exploring other avenues for collecting data that can supplement the web-sourced corpus becomes necessary.\n\nOne such avenue is the vast amount of content locked within PDFs. Numerous works, including government reports, educational material, religious texts, and literature, are predominantly available as documents often digitized in PDF formats. 
These documents are treasure troves of India's rich heritage, containing not just literary works but also comprehensive documents on traditions, practices, and historical accounts unique to different parts of the country. The significance of these documents extends beyond mere textual data; they serve as custodians of India's diverse cultural narratives, encapsulating knowledge that is often not available in web-friendly formats.\n\nWe download all the available Indian language PDFs from the Internet Archive, selecting high-quality documents through a detailed filtering process. Additionally, we collect documents from different government sources, including Parliamentary debates, magazines, textbooks, etc. We then perform OCR on them using GCP's Vision tool and release about 14.5B tokens of this data.\n\nSimilarly, a bulk of language data is present in audio form in videos, podcasts, radio broadcasts, movies, etc. This audio is another massive source of culturally rich Indic language data. Moreover, it represents the most natural form of human interaction, capturing dialects, slang, and other culturally relevant language usage that are often very difficult to find in web-sourced texts and books. For example, during the launch of Chandrayaan-3, the entire nation was delighted, and many wanted to express their thoughts. Hence we saw many videos and broadcasts uploaded onto YouTube where people expressed thoughts in their own language and style. Similarly, after India's defeat at the hands of Australia in the 2023 World Cup Final, many expressed their dismay in their most natural form - speech. 
Therefore, while extracting and transcribing this content is challenging, this opens up a whole new dimension for the model, making it more versatile.\n\nWe source movie subtitles in Indian languages from [OpenSubtitles](https://www.opensubtitles.org/en/search/subs) and collect other \"speech\" data from song lyrics, [Mann ki Baat](https://mib.gov.in/mann-ki-baat/), and [NPTEL](https://nptel.ac.in/) transcripts. These transcripts feature a substantial amount of cultural and technical text in Indian languages. Additionally, we download about 80K hours of high-quality verified \"Hindi\" videos from YouTube and transcribe them using the Hindi-conformer model. We release about 1.2B tokens of speech data as part of Sangraha."

},

{

"type": "markdown",

"heading": "Sangraha Synthetic",

"heading_level": "h3",

"content": "Despite these comprehensive efforts to diversify and enrich the data, a considerable portion of world knowledge and Indian content remains primarily available in English. One way to bridge this knowledge gap is via translation. We feel that translating all the knowledge-rich segments of English data, such as encyclopedias, textbooks, etc., to \"all\" Indian languages is necessary to make the Indian LLM proficient in general world knowledge.\n\nUtilizing IndicTrans2, we translated the entirety of English Wikimedia into 14 Indian languages, resulting in a total of nearly 90B tokens. Additionally, recognizing the prevalent trend of \"Romanized\" Indic language usage, particularly in informal settings and digital communication, we transliterate the above-translated Wikimedia content from the 14 languages to Roman script using IndicXlit, resulting in an additional 72B tokens."

},

{

"type": "markdown",

"heading": "Sangraha Unverified",

"heading_level": "h3",

"content": "While Sangraha Verified emphasizes \"human-verified\" data (across the formats), we acknowledge the practical challenges of scaling this effort. For this, we introduce the Sangraha Unverified split, via which we swiftly incorporate high-quality data from other existing collections (CulturaX and MADLAD-400 in our case). This method allows us to quickly expand our dataset with a wide range of texts, which, although not verified, still maintain the baseline quality standard of Sangraha Verified. We release about 24B tokens as part of Sangraha Unverified.\n\nThrough Sangraha, we're laying down a comprehensive framework for data curation that's mindful of the linguistic, cultural, and knowledge diversity of India. Each component of Sangraha (Verified, Unverified, and Synthetic) is designed with specific motivations and goals in mind. They collectively contribute to the creation of a data corpus that's truly representative of India. Along with Sangraha, we also release \"Setu\", a data cleaning and synthesis pipeline specially created to handle Indic language data from different modalities. The figure shows an overview of Setu. For technical details on the curation of Sangraha and Setu, we direct the readers towards our technical paper.\n\nWhile we still have a long way to go, this initial effort marks a significant step towards realizing an Indian LLM that's as diverse and dynamic as the population it aims to serve. 
The problem is far from being solved and we need a massive country-wide effort to collect every webpage of relevance, every video which has a unique story to share, every PDF which captures the wisdom of India and perhaps digitize every Indian language book in every library of India (we can come up with a clear framework to deal with copyright issues or simply follow the [Japanese guidelines on AI and copyright](https://www.privacyworld.blog/2024/03/japans-new-draft-guidelines-on-ai-and-copyright-is-it-really-ok-to-train-ai-using-pirated-materials))."

},

{

"type": "markdown",

"heading": "Instruction Fine-tuning: IndicAlign-Instruct",

"heading_level": "h2",

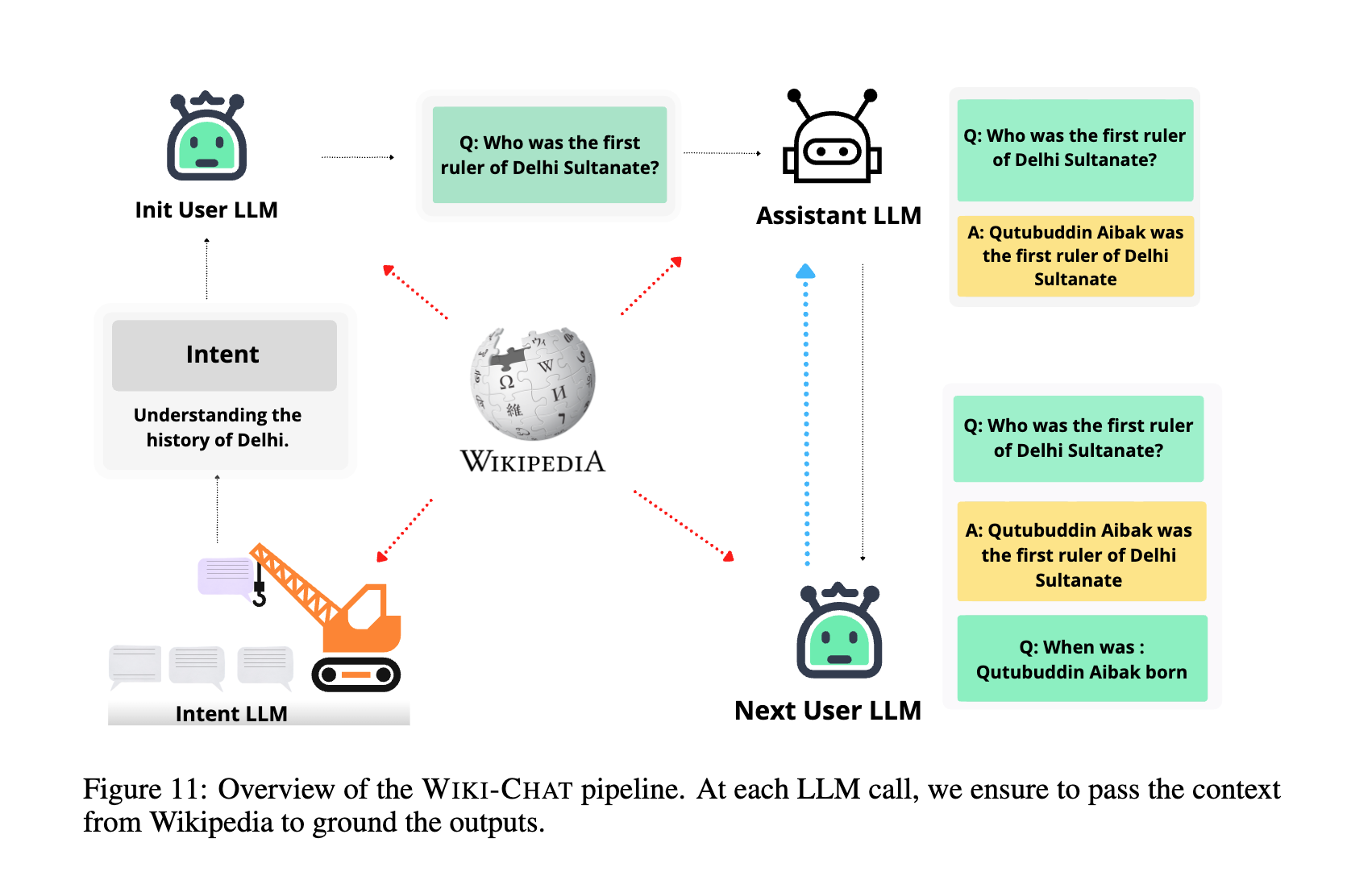

"content": "In the world of English LLMs, there are various ways in which Instruction Fine-tuning data is created. Replicating these methods for Indic languages is not directly possible. To start with, there are very few Indian language datasets available. Also, directly distilling Indic language data is not possible due to the absence of fully functional IndicLLMs. This creates a unique \"Chicken and Egg\" dilemma: without sufficient good-quality data, we cannot train effective models, and without effective models, generating the necessary data is difficult.\n\nWe attempt three approaches to create the \"initial batch\" of Instruction Fine-tuning data for Indian LLMs. Firstly, we utilize IndoWordNet, a lexically rich but rather under-appreciated resource, to create around 74M instruction-response pairs for 18 languages. Next, we extend the existing Indic-LLM efforts and translate the human-generated English datasets Dolly, OpenAssistant, and WikiHow to 14 Indian languages. Finally, we attempt to crowdsource around 43K conversations using our platform \"Anudesh.\" We pair these human-generated prompts with answers from open-sourced models by following the translate-test process - translating the prompts into English, getting the responses from an English LLM, and then translating those responses back into the Indic languages.\n\nWe direct the reader to our technical paper for more details about this data. We acknowledge that creating resources via translation is not at all ideal, but one has to start somewhere to break the chicken and egg problem."

},
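The translate-test process used to pair crowdsourced prompts with answers can be sketched as three composed steps. This is only an outline under stated assumptions: `translate_test` and its three callable parameters are hypothetical names, and the lookup-table stand-ins below replace the real translation systems and the English LLM, whose interfaces are not described here.

```python
from typing import Callable


def translate_test(
    prompt: str,
    to_english: Callable[[str], str],
    english_llm: Callable[[str], str],
    from_english: Callable[[str], str],
) -> str:
    """Answer an Indic-language prompt by routing it through English."""
    english_prompt = to_english(prompt)           # step 1: Indic -> English
    english_answer = english_llm(english_prompt)  # step 2: query the English LLM
    return from_english(english_answer)           # step 3: English -> Indic


# Toy stand-ins so the sketch runs end to end (not real models).
hi_to_en = {"भारत की राजधानी क्या है?": "What is the capital of India?"}
answers = {"What is the capital of India?": "New Delhi"}
en_to_hi = {"New Delhi": "नई दिल्ली"}

response = translate_test(
    "भारत की राजधानी क्या है?",
    to_english=hi_to_en.__getitem__,
    english_llm=answers.__getitem__,
    from_english=en_to_hi.__getitem__,
)
print(response)
```

Because every step is lossy, errors in either translation direction carry through to the final response, which is one reason translation is described above as a starting point rather than an ideal solution.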

{

"type": "markdown",

"heading": "Toxic Alignment: IndicAlign-Toxic",

"heading_level": "h2",

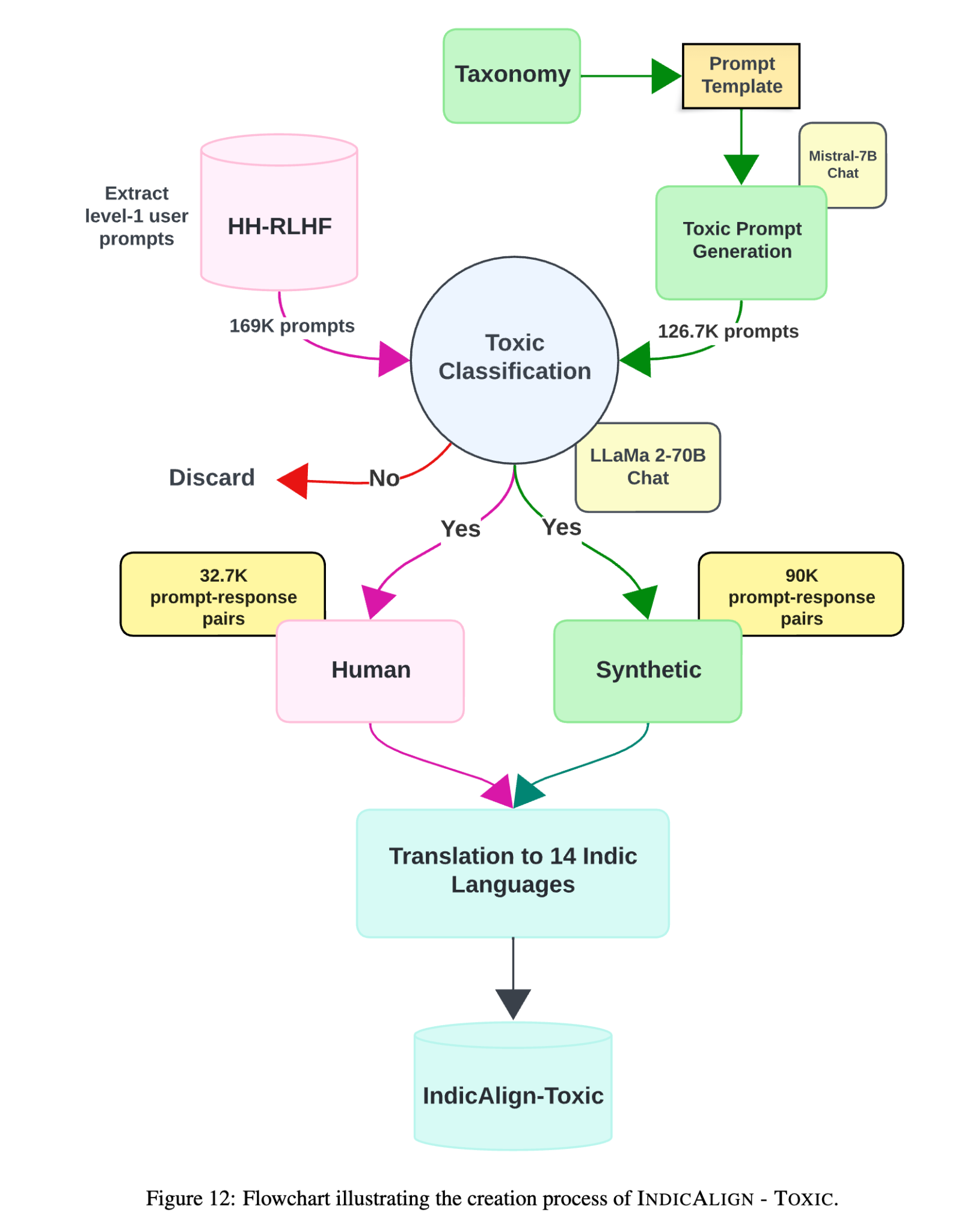

"content": "The process of aligning language models to navigate and mitigate toxic prompts in English remains largely opaque, with very few datasets being made public. One of the primary hurdles in achieving toxic alignment for Indic languages is the lack of a robust framework for detecting toxic content across all Indic languages. The diversity of the languages and dialects in India, each with its own nuances and cultural contexts, complicates the development of a one-size-fits-all solution.\n\nAs initial steps, we release two datasets for achieving a rudimentary toxic alignment for an Indian LLM. We use both human and synthetic data collection strategies and introduce two distinct datasets: \"HHRLHF-Translated\", comprising human-curated data, and \"Toxic Matrix\", a novel toxic alignment dataset created synthetically. We collect all the English toxic prompts from the HH-RLHF dataset and get their responses (i.e., refusals to answer the question with a reason) from open-source models. We then translate these pairs to 14 Indic languages to form HHRLHF-Translated. For Toxic Matrix, we first define a taxonomy of what a toxic prompt looks like in Indian contexts, having three main axes - Content Type, Target Group and Prompt Style. Then we use a combination of a relatively unaligned model (to generate toxic prompts using the taxonomy) and a well-aligned English model (to generate non-toxic responses or refusals). We then translate these prompt-response pairs to 14 Indic languages. The figure shows the flow of the entire pipeline. For additional technical details, we direct the reader to our technical paper."

},
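The three-axis taxonomy behind Toxic Matrix lends itself to a simple enumeration: every combination of Content Type, Target Group and Prompt Style becomes one cell to generate prompts for. The sketch below shows only that enumeration. The axis names come from the text above, while the axis values and the `build_matrix` helper are neutral placeholders invented for illustration, not the actual taxonomy.

```python
from itertools import product

# Axis names are from the text; the values are hypothetical placeholders.
TAXONOMY = {
    "content_type": ["insult", "stereotype"],
    "target_group": ["group_a", "group_b", "group_c"],
    "prompt_style": ["question", "instruction"],
}


def build_matrix(taxonomy):
    """Return one dict per combination of axis values (the matrix cells)."""
    axes = list(taxonomy)
    return [dict(zip(axes, combo)) for combo in product(*taxonomy.values())]


cells = build_matrix(TAXONOMY)
print(len(cells), "cells; first cell:", cells[0])
```

In the pipeline described above, each cell would seed prompt generation by the relatively unaligned model, with refusals coming from the well-aligned model; that part is not sketched here.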

{

"type": "markdown",

"heading": "The Path ahead and our Next Steps",

"heading_level": "h2",

"content": "**Pretraining Data**\n\nWe chart out the path ahead for curating comprehensive pretraining data for an Indian LLM. Here are the crucial next steps that we feel are extremely important to ensure the development of a truly comprehensive model:\n\n**Exhausting the \"Verified\" Web Content:** We need to crawl the entire web and scrape \"all\" the verified and high quality text available in \"all\" Indian languages. This involves both the process of discovery and gathering of content.\n\n**Mass Translation of other English Content:** Given that the majority of the \"world\" knowledge as well as \"Indian\" knowledge is still primarily available in English, we need to translate \"all\" of it to \"all\" Indian languages. This step will bridge the knowledge gap, ensuring that the Indian LLM benefits from global insights while serving local needs. This, of course, requires a parallel and equally important effort on improving MT systems for Indian languages.\n\n**Mass OCR on Indic and India-centric PDFs:** Despite our massive effort, there are still vast troves of undiscovered \"Indian\" PDF documents. Discovering and extracting text via OCR from these documents is essential for unlocking historical texts, cultural information, and India-specific knowledge.\n\n**Massive Digitization Efforts:** It's an undeniable fact that a majority of books and documents in India remain undigitized. A focused effort to digitize books and documents across India is not just ambitious; it is necessary. This massive digitization effort will ensure that no aspect of India's rich literary and cultural heritage is left behind in the digital era. \"Every document in every library and office in India must be digitized!\"\n\n**Development of Open-Source OCR Systems:** Relying on closed, licensed systems is not a viable option for an effort of this scale and significance. 
There's a pressing need for a robust, open-source OCR system for \"all\" Indian languages.\n\n**Mass Transcription Efforts:** The audio data publicly available in all Indian languages is a goldmine of spoken words, styles and dialects. Undertaking massive ASR transcription efforts is crucial. The entirety of Indian content available on YouTube and other public platforms must be discovered and transcribed.\n\n**Development of Open-Source ASR models and Normalization Systems:** Given the ambition to consume all spoken content on the web, good open-source ASR models for \"all\" Indian languages are the bare minimum. However, transcripts often come with irregularities, slang, and context-specific nuances that might not be well represented directly in textual format. Therefore, a good normalization module is also necessary, one that can consume the transcripts to produce clean, normalized text ready for pretraining."

},

{

"type": "markdown",

"heading": "Instruction Fine-tuning",

"heading_level": "h2",

"content": "While the approach of translating English IFT data is a practical starting point, it's far from a perfect solution. Direct translations often miss the cultural and contextual nuances of how Indians ask questions, the specific contexts they refer to, and the kind of response they expect. For instance, a straightforward translation of a dietary question might ignore regional dietary habits or local cuisine, leading to responses that are irrelevant to an Indian user. Consider a scenario where a user asks for a healthy breakfast option. A model trained on translated data might respond by suggesting \"oatmeal,\" a common recommendation in Western contexts, whereas an Indian might expect suggestions like \"idli\" or \"poha,\" reflecting local dietary preferences. This disconnect underscores why translation alone cannot meet the nuanced demands of instruction fine-tuning for an Indian LLM.\n\nCrowdsourcing emerges as a viable path forward, yet it's not devoid of challenges. An examination of our initiative, Anudesh, reveals an extremely skewed participation, highlighting the need for inclusivity in crowdsourcing efforts. Ensuring representation across all languages, age groups, education levels, and regions is critical for capturing the full spectrum of Indian queries and expectations. But ultimately, the goal is to assemble \"gold\" standard prompt-response pairs, where both prompts and responses are sourced directly from humans.\n\nFollowing this, we announce our efforts to curate this data by following a structured approach rooted in a comprehensive taxonomy. We try to define all the different things someone can ask by organising them across four major axes: prompt structure, intent, domain, and language. 
We hypothesize that covering all possible combinations of the above axes will result in a dataset that can help us achieve the ideal goal, thereby ensuring that the Indian LLM can effectively serve the unique needs and expectations of its users."

},
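The hypothesis that covering every combination of the four axes yields the ideal dataset suggests a simple bookkeeping task: given the prompts collected so far, list the combinations that still have no examples. The sketch below assumes each collected prompt is already labelled along the four axes; the axis values and the `missing_cells` helper are hypothetical and chosen only to keep the example small.

```python
from itertools import product

# Four axes from the text; the values listed are illustrative placeholders.
AXES = {
    "structure": ["single_turn", "multi_turn"],
    "intent": ["information", "advice"],
    "domain": ["health", "agriculture"],
    "language": ["hin", "tam"],
}


def missing_cells(samples):
    """Return the axis-value combinations not covered by any sample."""
    all_cells = set(product(*AXES.values()))
    seen = {tuple(sample[axis] for axis in AXES) for sample in samples}
    return sorted(all_cells - seen)


collected = [
    {"structure": "single_turn", "intent": "advice", "domain": "health", "language": "hin"},
    {"structure": "single_turn", "intent": "advice", "domain": "health", "language": "hin"},
    {"structure": "multi_turn", "intent": "information", "domain": "agriculture", "language": "tam"},
]

gaps = missing_cells(collected)
print(f"{len(gaps)} of {len(list(product(*AXES.values())))} combinations still uncovered")
```

A report like this could help steer crowdsourcing towards under-represented languages and domains, which matters given the skewed participation noted above.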

{

"type": "markdown",

"heading": "Toxic Alignment",

"heading_level": "h2",

"content": "It's evident that we have a very challenging journey ahead in achieving ideal toxic alignment for Indic languages. The drawbacks of translating English data are more pronounced in toxic data. Derogatory terms and phrases simply do not have direct equivalents across languages. The lexicon of offensive language is often deeply rooted in various dialects and local contexts, and therefore varies dramatically from one region to another. Certain phrases that may seem innocent in English could carry a heavy load of cultural insensitivity or bias within Indian contexts. Similarly, a toxic phrase in English could translate into an innocent phrase in Hindi, which harms the alignment of the model.\n\nWe feel the way forward again involves creating a detailed taxonomy that categorizes toxic content not just by its severity but also by its cultural and contextual relevance across different Indian regions. This taxonomy will serve as a guide for crowdsourcing as well as manual curation efforts. Additionally, social media platforms, with their vast and dynamic exchange of ideas, can be invaluable sources for mining toxic content. These platforms often mirror the latest trends in language use, including the evolution of slang and the emergence of new forms of derogatory language.\n\nWe also highlight the need to develop good open-source toxic content detection models for Indian languages that are capable of understanding and interpreting content contextually. This means not just identifying offensive words but understanding the intent behind statements, the cultural context, and the potential for harm. Such models must be able to navigate the fine line between freedom of expression and the prevention of harm."

},

{

"type": "markdown",

"heading": "Last but not the least",

"heading_level": "h2",

"content": "As we conclude this exploration into the development of an Indian LLM, it's imperative to address an equally complex and vital aspect: evaluation. Testing LLMs goes beyond just checking if they understand and speak the language; it's about ensuring that they can actually perform the real-world tasks they are expected to do, i.e., whether they're practical for the varied everyday needs of people across India. With the landscape of Indic LLMs steadily expanding, a unified mechanism for evaluating them is crucial, one that reflects real-life use and cultural understanding more than existing benchmarks (IndicXtreme, IndicNLG, etc.) do.\n\n(Pssst!! Stay tuned for more insights on this in our upcoming blog. There's a lot more to explore, and we're just getting started!!)"

},

{

"type": "markdown",

"heading": "Conclusion",

"heading_level": "h2",

"content": "The creation of LLMs specifically for India's many languages and cultures is like embarking on a big and bold adventure. It's a step toward making sure everyone in India, with its rich mix of languages and cultures, can use and benefit from the latest technology. Making these models truly understand and generate content in all of India's languages is a tough challenge. Despite this, there's a strong sense of hope. Through efforts like the IndicLLMSuite, we're laying strong foundations for creating a model that doesn't just speak multiple languages but understands the cultural nuances of India.\n\nThe road ahead is filled with obstacles, but also with possibilities. Building an Indian LLM is not just a technical challenge; it's a mission to include everyone in the digital age. This vision lights up the path toward a future where technology truly serves and enriches the diverse and vibrant fabric of India."

}

],

"team": null,

"bibtex": "",

"page_url": "indicllm-suite"

},

{

"id": 28,

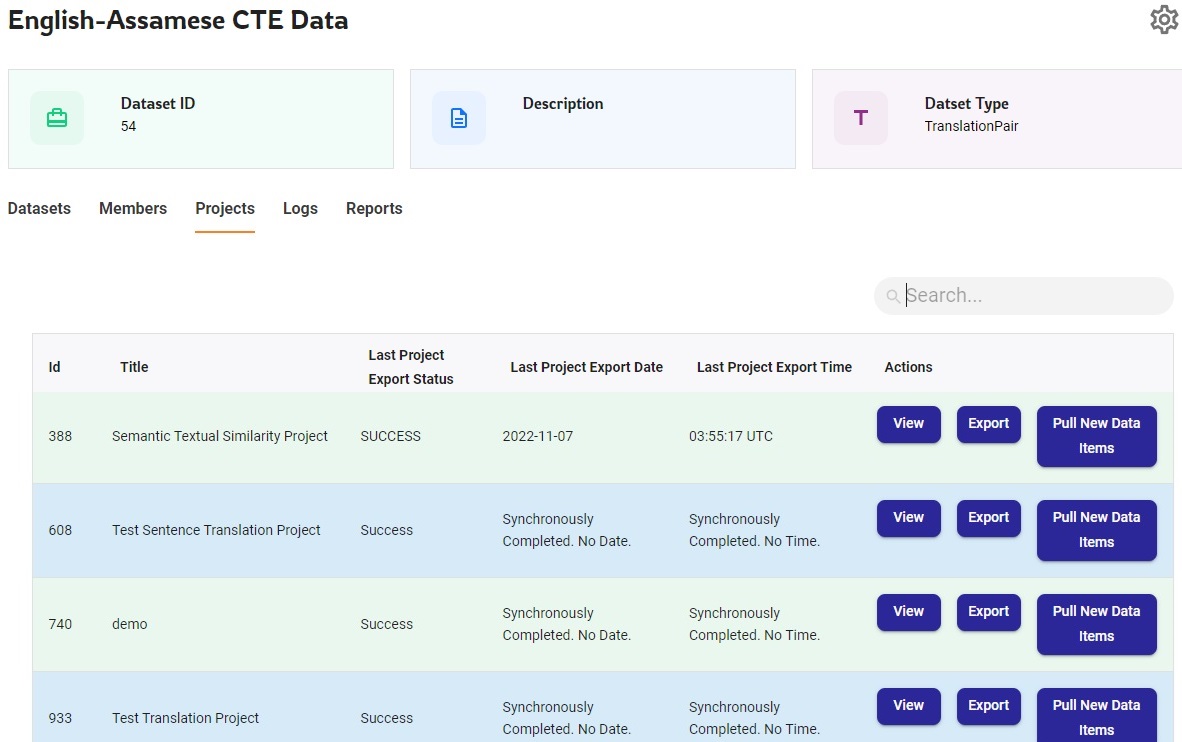

"title": "Shoonya - AI4Bharat’s AI-powered Data Annotation Platform",

"description": "Shoonya is an open-source AI-powered data annotation platform with an integrated workforce management system, that has been built with a vision to enhance the digital presence of Indian languages. At AI4Bharat, a large team of language experts annotate the data in all the 22 official languages of India on Shoonya, which is then used for creating open-source dataset repositories, which, in turn, are used for training open-source machine learning models being developed by AI4Bharat. Shoonya currently supports contextual verification and translation of sentences and transcription of audio and OCR files.",

"published_on": "2024-02-05",

"image": "https://admin.models.ai4bharat.org/media/images/Shoonya%20-%20AI4Bharat%E2%80%99s%20AI-powered%20Data%20Annotation%20Platform/shoonya_5.png",

"related_link": null,

"markdown_content": "",

"cover_image": null,

"authors": [

{

"name": "A Aparna"

},

{

"name": "Ishvinder Sethi"

}

],

"affiliations": null,

"publication_links": [

{

"text": "GitHub",

"url": "https://github.com/AI4Bharat/Shoonya",

"icon": "github"

},

{

"text": "Demo Video",

"url": "https://www.youtube.com/watch?v=vkjCmCWejMM",

"icon": "youtube"

}

],

"sections": [

{

"type": "markdown",

"heading": "Introduction",

"heading_level": "h1",

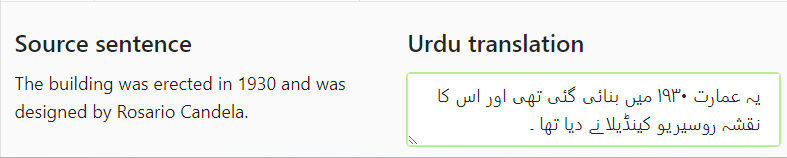

"content": "Shoonya is an open-source, AI-powered data annotation platform with an integrated workforce management system, built with a vision to enhance the digital presence of Indian languages. At AI4Bharat, a large team of language experts annotates data in all 22 official languages of India on Shoonya. This data is used to create open-source dataset repositories, which in turn are used to train the open-source machine learning models being developed by AI4Bharat. Shoonya currently supports contextual verification and translation of sentences, and transcription of audio and OCR files.\n\nA highlight of Shoonya is that it has been built by incorporating the invaluable feedback of hundreds of language experts using the platform in real time. As a result, the platform is scalable and can handle a large number of simultaneous requests.\n\n"

},

{

"type": "markdown",

"heading": "The Technology Behind It",

"heading_level": "h2",

"content": "Shoonya is built with Material UI on the frontend and Django REST Framework on the backend. It uses PostgreSQL as the database and integrates the open-source data labeling platform [Label Studio](https://labelstud.io/) to build its annotation system.\n\nLeveraging open-source models developed by AI4Bharat ([IndicASR](https://ai4bharat.iitm.ac.in/areas/asr) and [IndicTrans](https://ai4bharat.iitm.ac.in/areas/nmt)), Shoonya can auto-generate transcriptions for audio files and translations for sentences. AI4Bharat's open-source transliteration model ([IndicXlit](https://ai4bharat.iitm.ac.in/areas/xlit)) is used for typing in Indian languages. Right-to-left typing for languages like Urdu is also supported.\n\nAI4Bharat's open-source [Indic Glossary](https://glossary.ai4bharat.org/) is also integrated with Shoonya to help language experts with translations at the word level."

},

{

"type": "markdown",

"heading": "The Hierarchical Categorization of Data & People",

"heading_level": "h2",

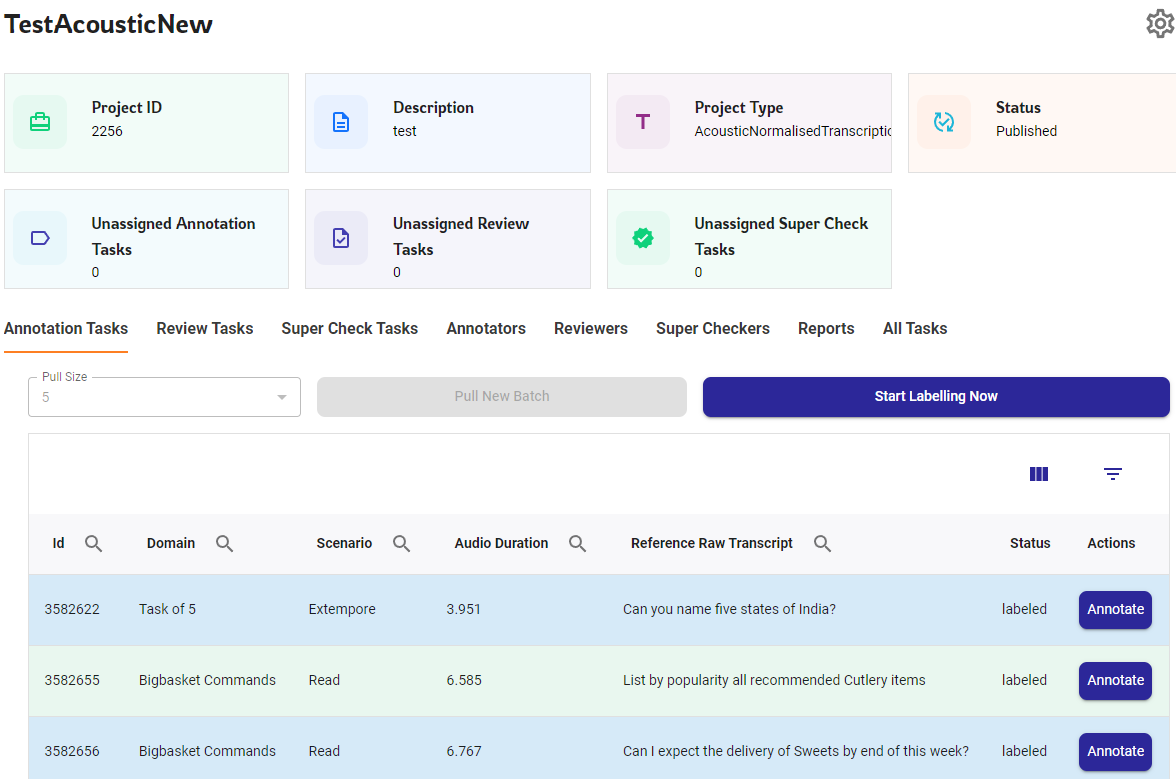

"content": "Shoonya's hierarchical arrangement makes both collaboration and management easier. It comprises Organizations, Workspaces and Projects and, at the granular level, tasks (the data items to be annotated) and their annotations, with each module having its own pre-defined permissions and access control. This hierarchy lets more than one organization exist on the same platform, with each organization's data restricted to itself.\n\nEach annotation also goes through a hierarchical quality check: a data item is first annotated, then reviewed and finally super-checked, all within Shoonya itself. This multi-layered annotation, review and supercheck workflow ensures that the final data is of high quality.\n\nSource sentences to be translated first go through a verification stage, and only those sentences identified as clean and grammatically correct are translated. Users can skip any task that they feel does not meet the quality requirements."

},

{

"type": "markdown",

"heading": "Workforce Management System",

"heading_level": "h2",

"content": "The Organization, Workspace and Project each have various user roles associated with them. The roles at each level follow their own hierarchy, with each role having the authority to perform all the activities allowed for the roles below it. This makes workforce management easier.\n\nEvery organization using Shoonya has its own organization database created for it. Each organization has a set of workspaces, which offer the flexibility to categorize projects on the basis of language, annotation type, domain, annotator team, etc. Users can belong to multiple workspaces.\n\nEvery workspace groups its annotation tasks into different projects, with each project belonging to a specific language and an annotation type such as translation or transcription. Every project has its own set of language experts, and a multilingual expert has the flexibility to work on multiple languages. The actual data annotation work happens within a project. Because multi-layered quality checks are supported, there are separate user roles for each check within a project.\n\n"

},

{

"type": "markdown",

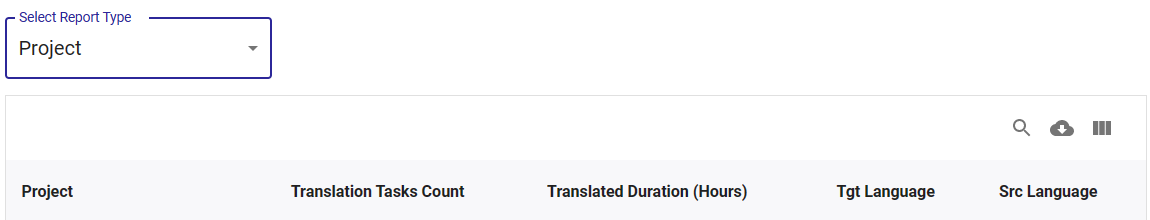

"heading": "Enabling Performance & Progress Tracking through Reports",

"heading_level": "h2",

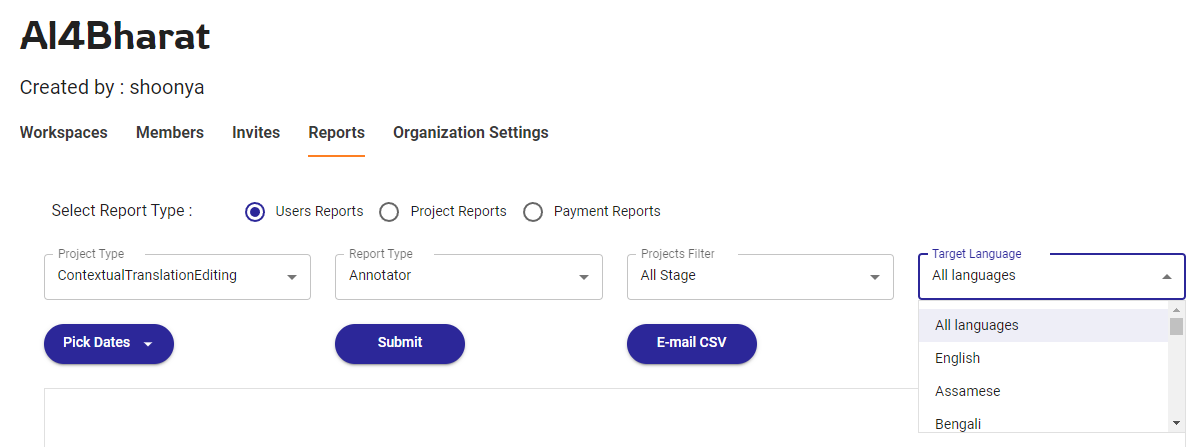

"content": "Shoonya provides both user-based and project-based reports at all levels of the organization structure. This helps users at all levels keep track of project progress as well as user performance. These reports can be filtered by language or quality-check level. Users also have the option to have the reports and their daily progress sent to them by email.\n\nThe reports provide all the statistics related to the tasks at either the user level or the project level, as selected: the number of tasks, the different statuses the tasks and their annotations are in, the dates when they were completed and the number of times a task failed a quality check. Other task metrics such as the word count, sentence count and average word error rate (for text-based annotations) and the transcribed hours (for audio-based annotations) are also shown.\n\n"

},

[

{

"type": "markdown",

"heading": "Data Management System",

"heading_level": "h2",

"content": "Shoonya has a 'Datasets' module which has different dataset instances representing each type of data that can be annotated on it. Each dataset instance will have multiple datasets of its type and each dataset will have data items to be annotated."

},

{

"type": "markdown",

"heading": "Dataset Instances",

"heading_level": "h3",

"content": "These are the dataset instances currently supported in Shoonya:\n\n**Sentence Text** - A sentence, its context, its language, domain and quality check status, and the human-corrected version of the sentence\n\n**Translation Pair** - A source sentence, its context, its source & target languages, its machine translation & human-verified output translation, LaBSE score & rating\n\n**Conversations (Text)** - A conversation in text format and its metadata, quality check status, its source & target languages, its machine translation and human-verified output translation\n\n**Speech Conversations (Audio)** - An audio file and its metadata, its language, machine-generated transcript and human-verified transcript\n\n**OCR Documents (Images)** - An image file and its metadata, its language, machine-generated OCR prediction & human-verified transcript"

}

],

{

"type": "markdown",

"heading": "Datasets and Projects Mapping",

"heading_level": "h3",

"content": "There is a separate project type in Shoonya for every type of annotation that can be done on a dataset instance. Each project belongs to a specific project type and language and uses the data items from a dataset. For example, a contextual translation editing project will use the 'Translation Pair' dataset instance.\n\nEach project has a set of tasks, with each task representing one data item from that dataset that has to be annotated. For example, a task in a sentence translation project will have a source sentence that has to be translated, and its output translation will initially be blank.\n\nOnce the annotation of a task is complete, its final quality-checked annotation version is exported back to the source dataset. For example, the output translation entered by the user for a task will be copied as the output translation of the corresponding data item in the dataset."

},

{

"type": "markdown",

"heading": "Data Management Features",

"heading_level": "h3",

"content": "Shoonya offers a user-friendly interface to handle all the data processing work from the platform itself, without the need to access the backend. There is a user interface for everything: creating new dataset instances, uploading and downloading datasets, creating and downloading projects and their associated reports, and accessing the error logs."

},

{

"type": "markdown",

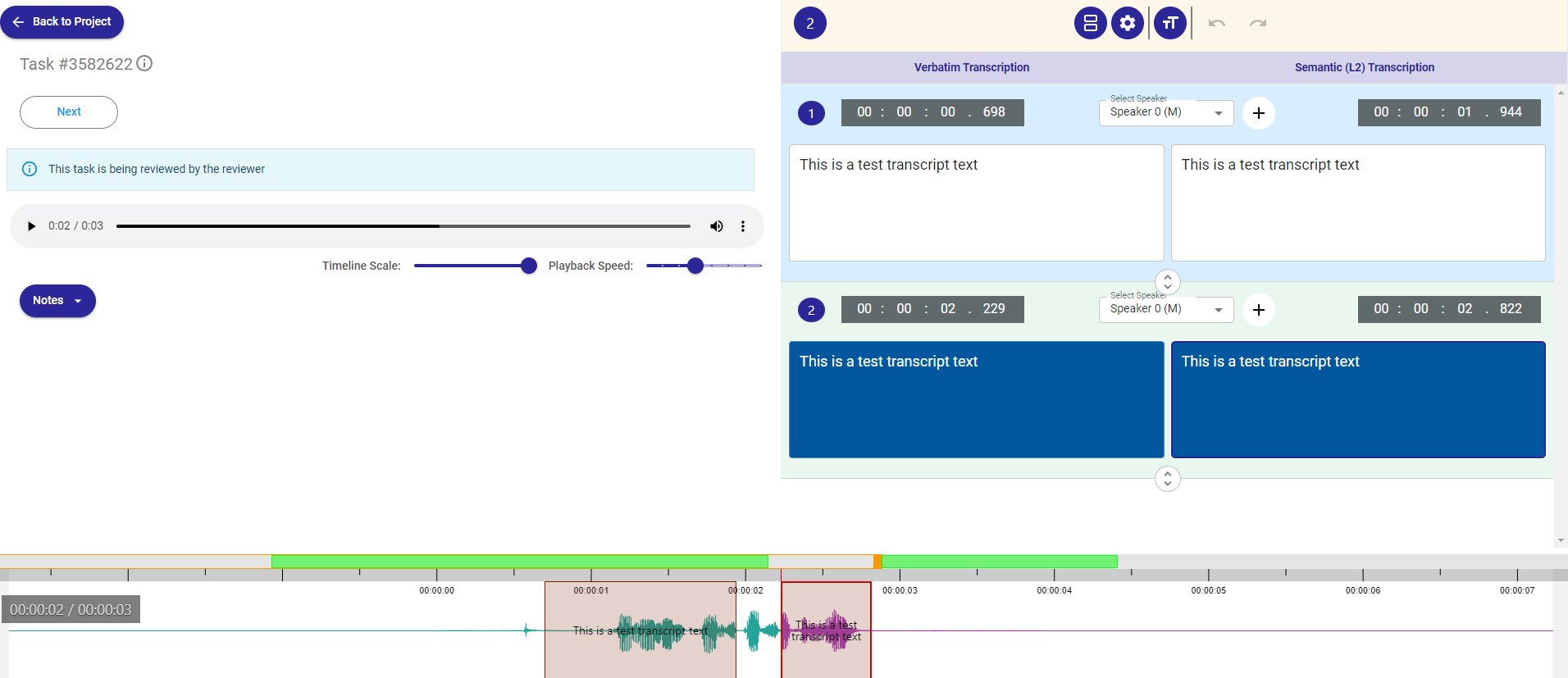

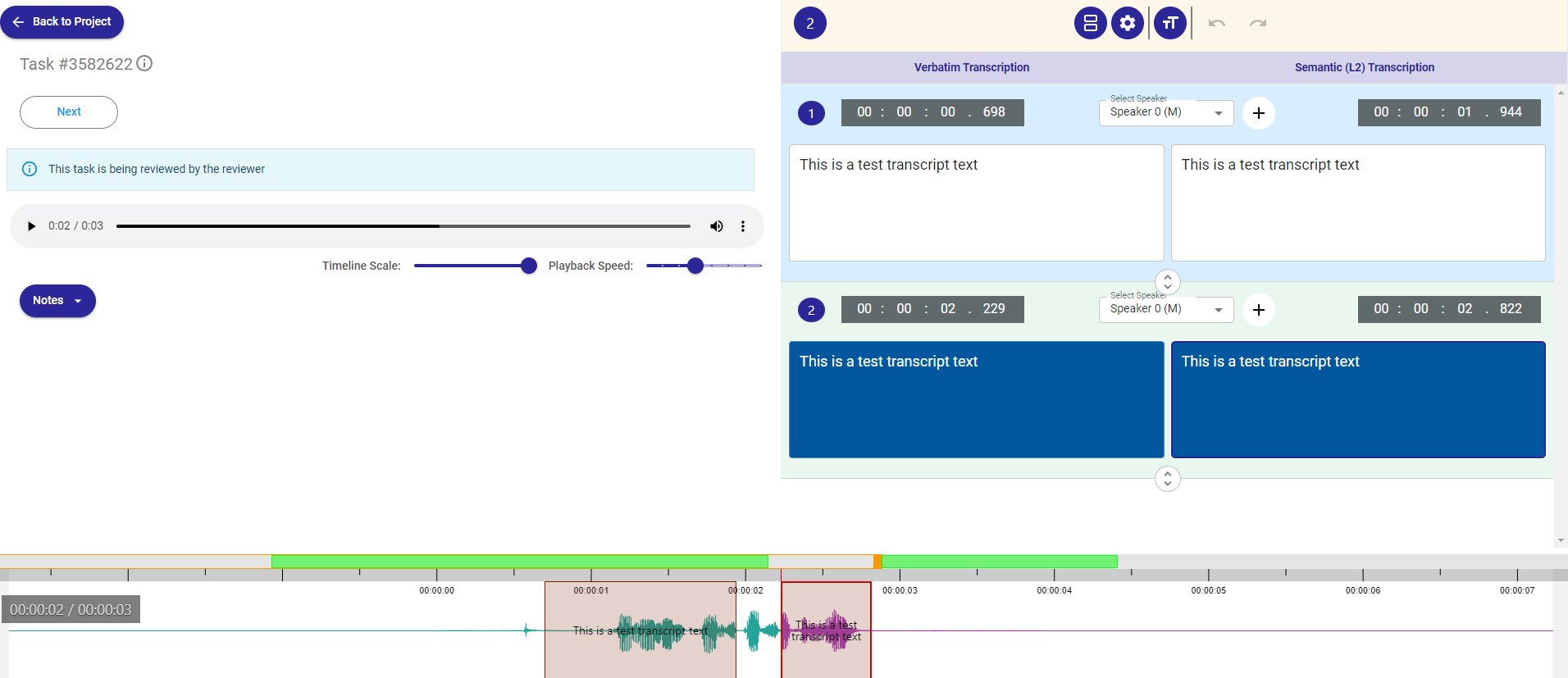

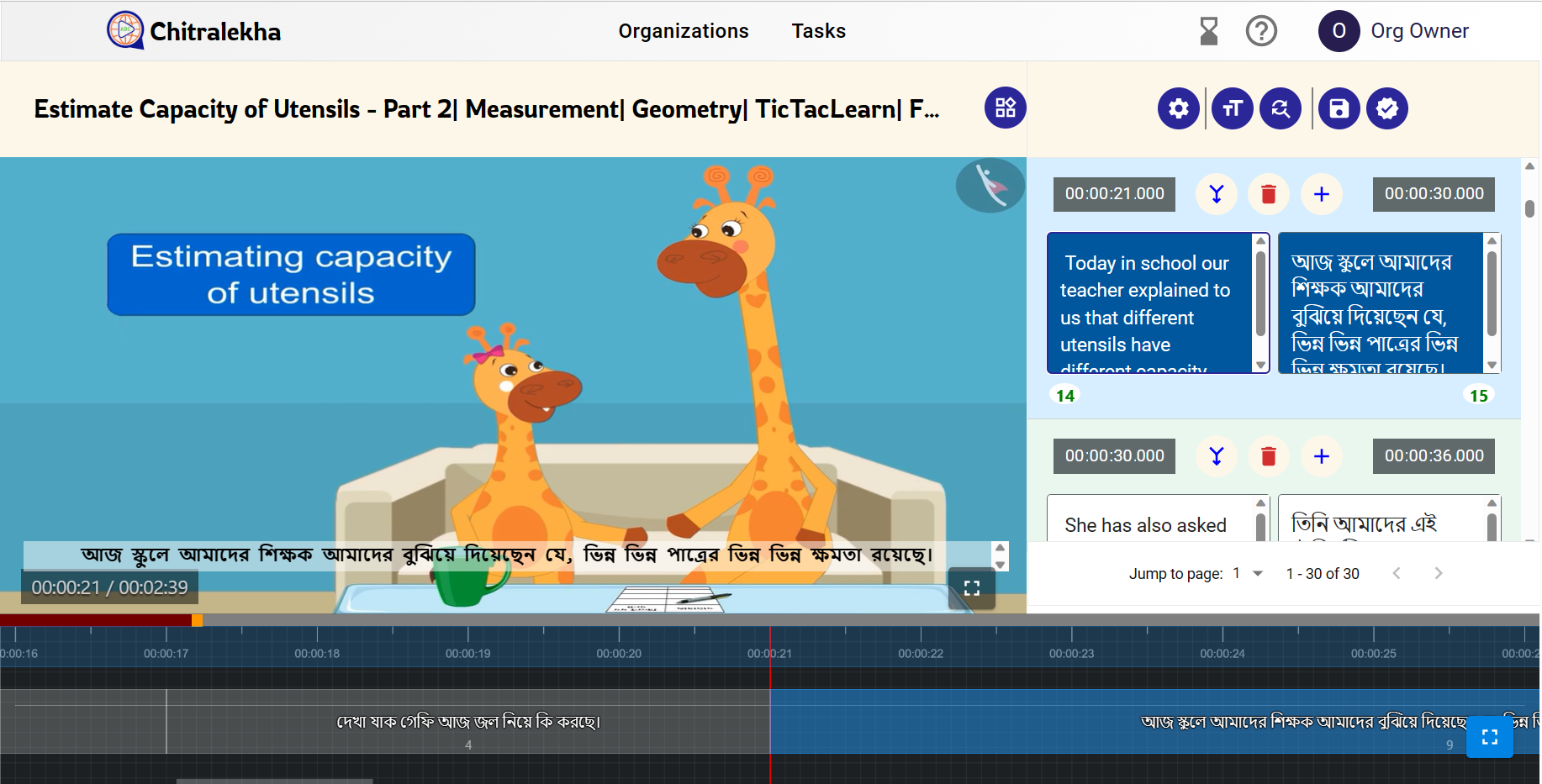

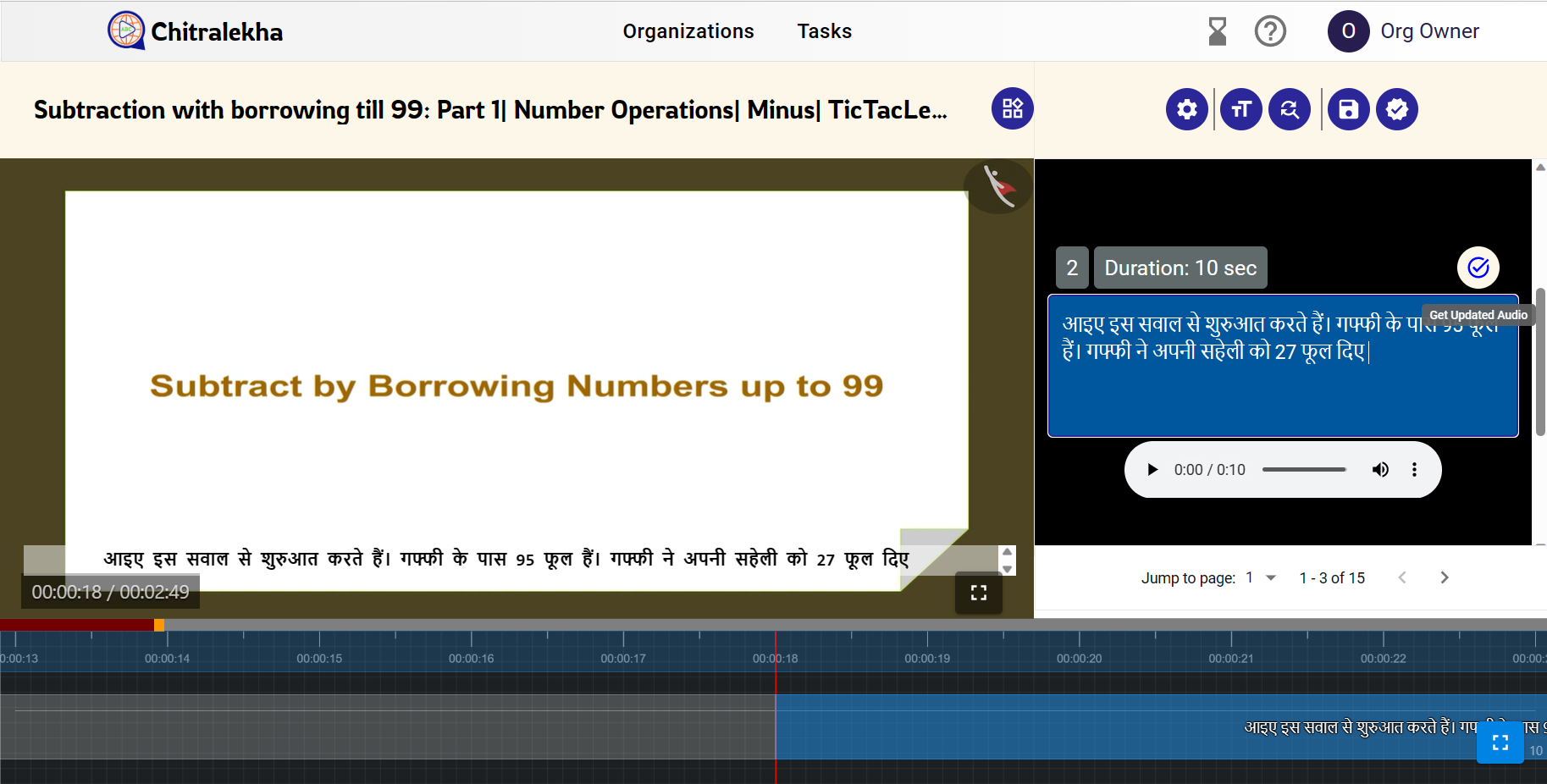

"heading": "The Annotation System",

"heading_level": "h2",

"content": "Every task in a project has a status, which changes through the lifecycle of the project depending on the transitions its annotation(s) go through. Each task has separate versions of annotations belonging to the annotator, the reviewer and the superchecker. However, a task has only one quality-checked version marked as its correct annotation.\n\nEach annotation also has an annotation status linked to it. This indicates whether the annotation is yet to be started, is in progress, has been skipped by the user, has been rejected by the reviewer/superchecker or has finally been completed."

},

{

"type": "markdown",

"heading": "The Annotation Interface",

"heading_level": "h3",

"content": "Every project type has its own annotation interface, which shows the user the data to be annotated, its context and its machine-generated annotation, along with other metadata such as speaker details for an audio file. Users can enter their own verified output annotation in the given text areas and can move the annotation into different statuses here.\n\n"

},

{

"type": "markdown",

"heading": "The Annotator-friendly Features",

"heading_level": "h3",

"content": "Users can **manage their assigned task count** by choosing the number of tasks to be assigned and deallocating their tasks at any point in time.\n\nShoonya provides **machine-generated annotations and in-built transliteration and glossary features**, which significantly reduce the time taken by a user to complete a task.\n\nShoonya has an **in-built annotation feedback mechanism** in which users can enter optional notes for each annotation on the annotation page itself. This facilitates easy sharing of feedback between annotators, reviewers and supercheckers.\n\nShoonya lets users track their own work across all their projects through the **user's progress reports**."

},

{

"type": "markdown",

"heading": "Conclusion",

"heading_level": "h2",

"content": "For any organization passionate about creating, digitizing and annotating content in Indian languages, thereby bridging the divide between the Indian language speaking community and the advancing AI technologies, Shoonya is the open-source platform to turn to."

}

],

"team": null,

"bibtex": "",

"page_url": "shoonya"

},

{

"id": 30,

"title": "IndicTrans2-M2M",

"description": "In May 2023, we released the IndicTrans2 models, which were the first models to support translation between all 22 scheduled Indic languages and English. This initiative aligns with our overarching vision of delivering high-quality open-source models that are competitive with commercial systems. As part of this endeavor, we continually work to improve the accessibility and democratization of our models.",

"published_on": "2023-12-03",

"image": "https://admin.models.ai4bharat.org/media/images/IndicTrans2-M2M/indictrans_2.png",

"related_link": null,

"markdown_content": "",

"cover_image": null,

"authors": [

{

"name": "Jay Gala"

},

{

"name": "Pranjal A. Chitale"

},

{

"name": "Raghavan AK"

},

{

"name": "Varun Gumma"

},

{

"name": "Sumanth Doddapaneni"

},

{

"name": "Aswanth Kumar"

},

{

"name": "Janki Nawale"

},

{

"name": "Anupama Sujatha"

},

{

"name": "Ratish Puduppully"

},

{

"name": "Vivek Raghavan"

},

{

"name": "Pratyush Kumar"

},

{

"name": "Mitesh M. Khapra"

},

{

"name": "Raj Dabre"

},

{

"name": "Anoop Kunchukuttan"

}

],

"affiliations": null,

"publication_links": [

{

"text": "arxiv",

"url": "https://arxiv.org/abs/2305.16307",

"icon": "arxiv"

},

{

"text": "github",

"url": "https://github.com/AI4Bharat/IndicTrans2",

"icon": "github"

},

{

"text": "hugging face",

"url": "https://huggingface.co/collections/ai4bharat/indictrans2-664ccb91d23bbae0d681c3ca",

"icon": "huggingface"

}

],

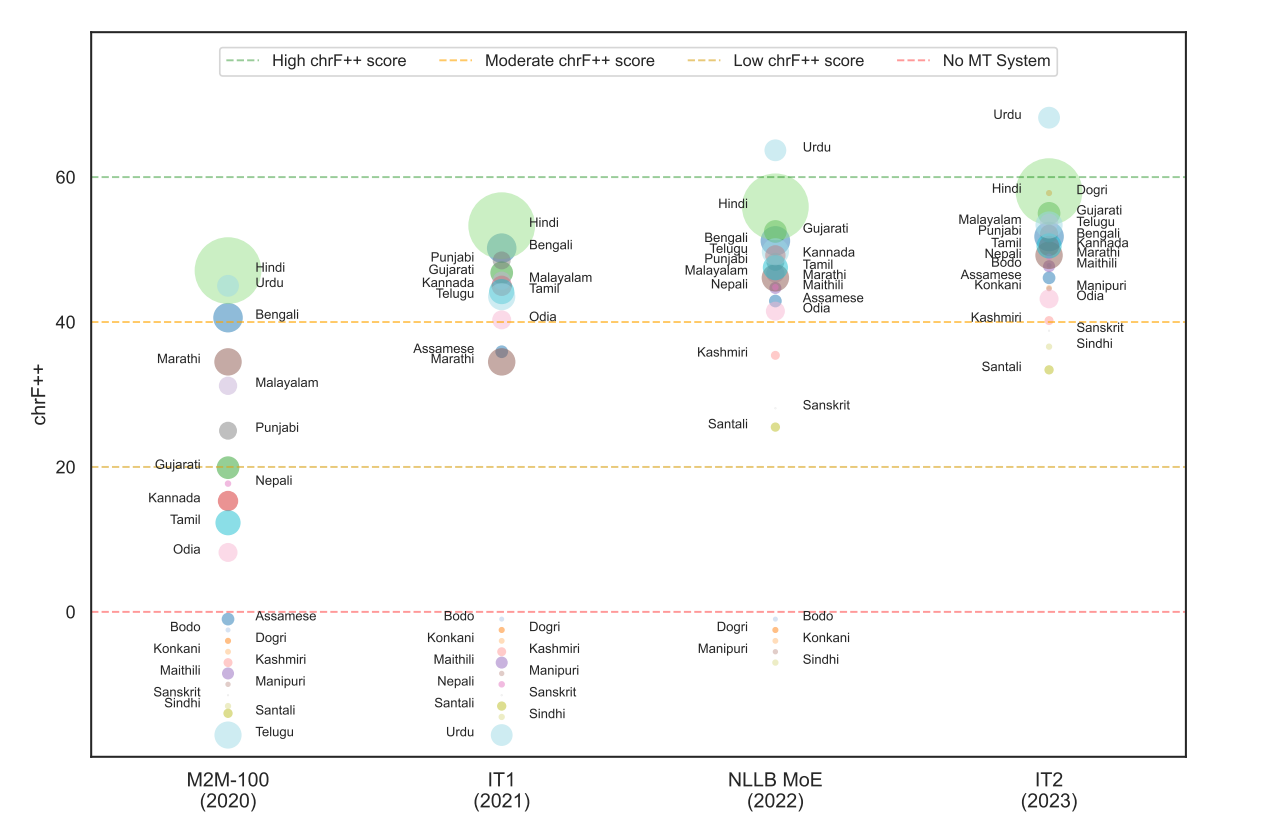

"sections": [

{

"type": "markdown",

"heading": "IndicTrans2-M2M: Indic to Indic Machine Translation Systems Supporting Translation Between all 22 Scheduled Languages.",

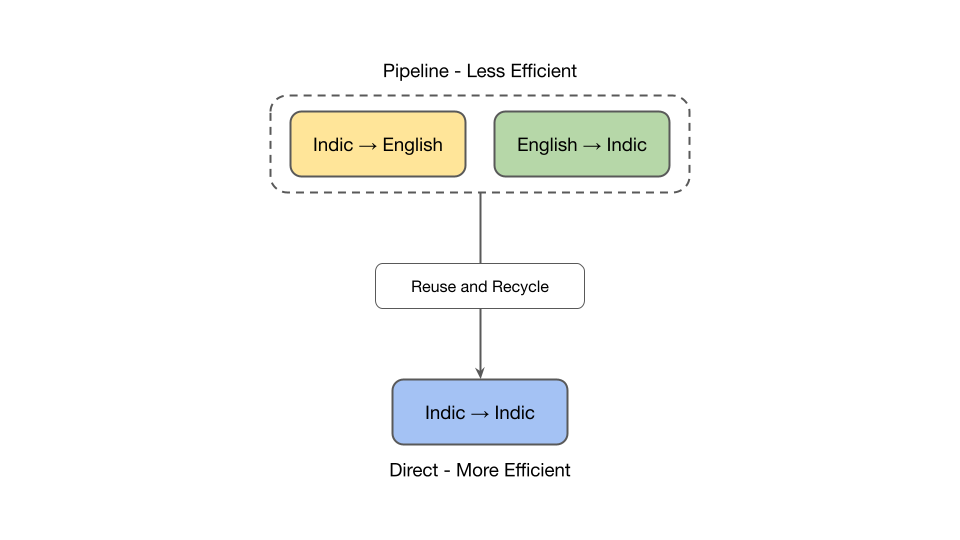

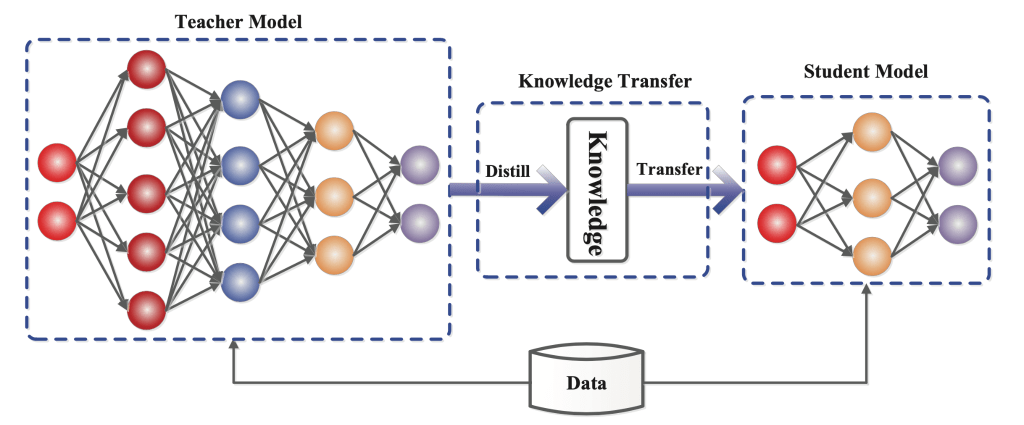

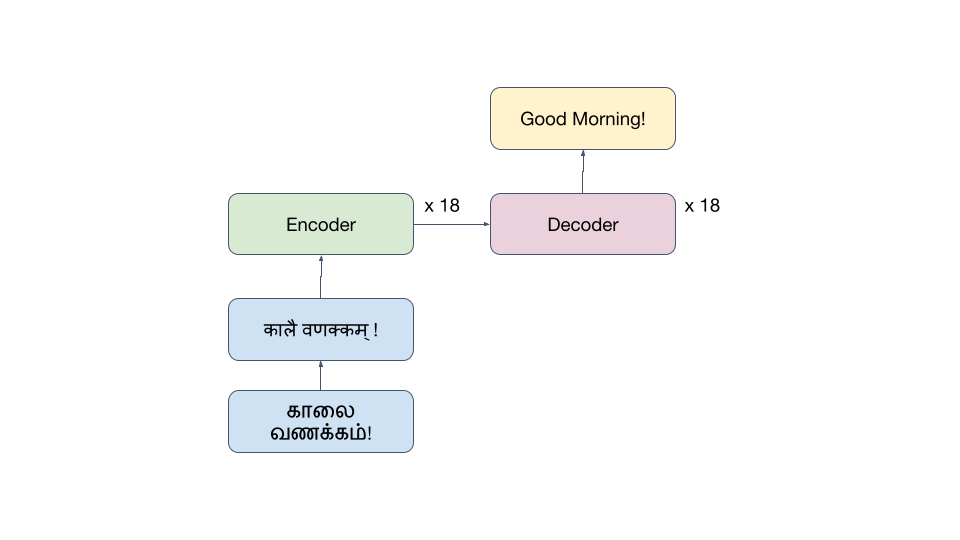

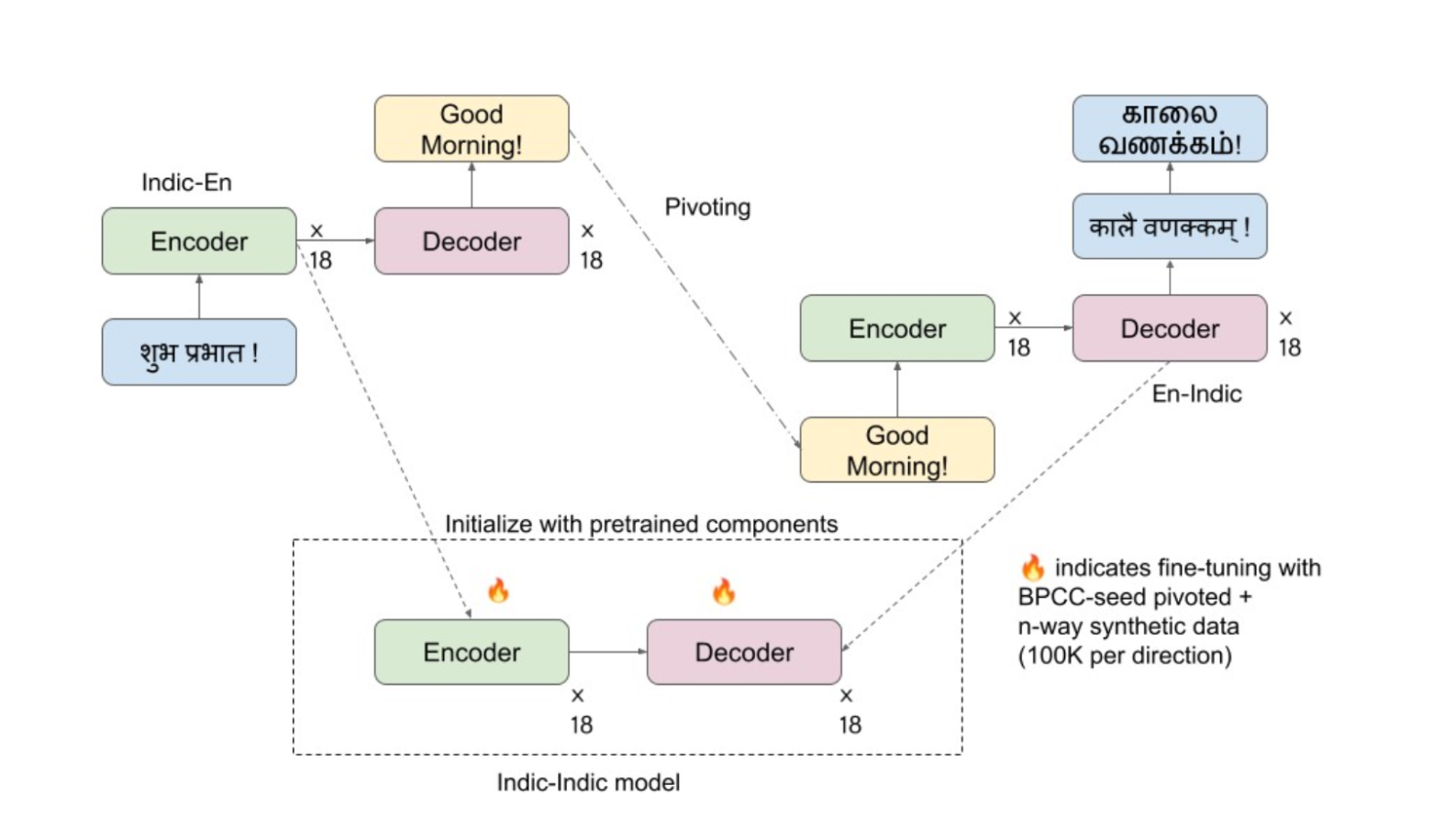

"heading_level": "h1",